Translating Digital Silence into Biological Resonance

PALOVUE EarSound Wireless Earbuds

We accept it as a mundane reality that we can walk down a bustling city street while hearing music with something close to the precision of a recording studio. Yet the physical processes required to bridge the gap between a silent digital file stored on a solid-state drive and the mechanical vibrations inside the human cochlea are staggeringly complex. The modern untethered earbud is not merely a speaker; it is a 2.4 GHz radio transceiver, a digital signal processor, a chemical energy storage unit, and an electro-acoustic transducer, all condensed into a chassis weighing less than a standard sheet of paper.

By dissecting the engineering choices embedded in a contemporary device like the PALOVUE EarSound—which relies on Bluetooth 5.3, AAC/SBC codec translation, 6mm dynamic drivers, and neural network noise reduction—we can move past consumer specifications and uncover the foundational laws of physics and biology that govern personal audio.

When a Crowded Subway Becomes a Private Concert Hall

The journey of sound begins with a leap across the electromagnetic spectrum. To deliver an uninterrupted stream of data to a moving target, engineers must navigate one of the most hostile environments in consumer technology: the 2.4 GHz Industrial, Scientific, and Medical (ISM) radio band.

This specific slice of the spectrum is an unlicensed, crowded frontier, heavily populated by Wi-Fi routers, microwave ovens, IoT sensors, and dozens of other smartphones. Transmitting fragile audio data through this invisible tempest requires aggressive architectural strategies. The implementation of Bluetooth 5.3 relies fundamentally on an advanced iteration of Frequency-Hopping Spread Spectrum (FHSS).

Rather than transmitting on a single static frequency, which would be vulnerable to any local interference, a Bluetooth Classic audio link rapidly switches its carrier frequency across 79 distinct 1 MHz channels, hopping up to 1,600 times per second. However, purely pseudo-random hopping is insufficient in dense urban environments, so the protocol layers Adaptive Frequency Hopping (AFH) on top, driven by channel classification. The host device and the earbuds assess the quality of each channel and share the results. If a specific frequency overlaps with a nearby Wi-Fi router broadcasting heavily, the link flags that channel as "bad" and removes it from the hopping sequence, provided at least 20 channels remain in use.

This is the hidden mechanism behind the "quick and stable transmission" noted in devices like the PALOVUE EarSound. It is not just about signal strength; it is about evasion. By continuously adapting the map of available frequencies, the system avoids most packet collisions, and retransmission plus a small receive buffer cover the packets that are still lost. The digital payload therefore arrives with few audible dropouts, maintaining the illusion of a solid wire where none exists.
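The channel-exclusion idea described above can be sketched in a few lines. This is a toy model under stated assumptions: the real hop-selection kernel is derived from the device clock and address, so `random.Random` is only a stand-in, and the blocked range (channels 10 through 31) is a hypothetical Wi-Fi network, not a measurement. The names `build_channel_map` and `hop_sequence` are invented for illustration.

```python
import random

NUM_CHANNELS = 79        # Bluetooth Classic: 79 channels spaced 1 MHz apart
MIN_USED_CHANNELS = 20   # adaptive hopping keeps at least 20 channels in use


def build_channel_map(bad_channels):
    """Return the sorted list of channels still allowed in the hop sequence."""
    good = [ch for ch in range(NUM_CHANNELS) if ch not in bad_channels]
    if len(good) < MIN_USED_CHANNELS:
        raise ValueError("too few usable channels left for adaptive hopping")
    return good


def hop_sequence(channel_map, hops, seed=0):
    """Toy pseudo-random hop sequence drawn only from the allowed channels."""
    rng = random.Random(seed)  # stand-in for the real clock-driven selection
    return [rng.choice(channel_map) for _ in range(hops)]


# Hypothetical scenario: one Wi-Fi network occupies about 22 MHz of the band.
wifi_blocked = set(range(10, 32))
channel_map = build_channel_map(wifi_blocked)
sequence = hop_sequence(channel_map, hops=1600)  # one second of hops

print(len(channel_map))                            # 57
print(any(ch in wifi_blocked for ch in sequence))  # False
```

The point of the sketch is that interference avoidance happens in the channel map, not in the hop generator: once a channel is classified as bad, no hop can land on it.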

Why Do 6 Millimeters Sound Like a Symphony?

Once the digital payload arrives intact, it must be converted from binary data into fluctuating electrical currents, and finally into physical waves of varying atmospheric pressure. This heavy lifting is performed by the electro-acoustic transducer, commonly known as the driver.

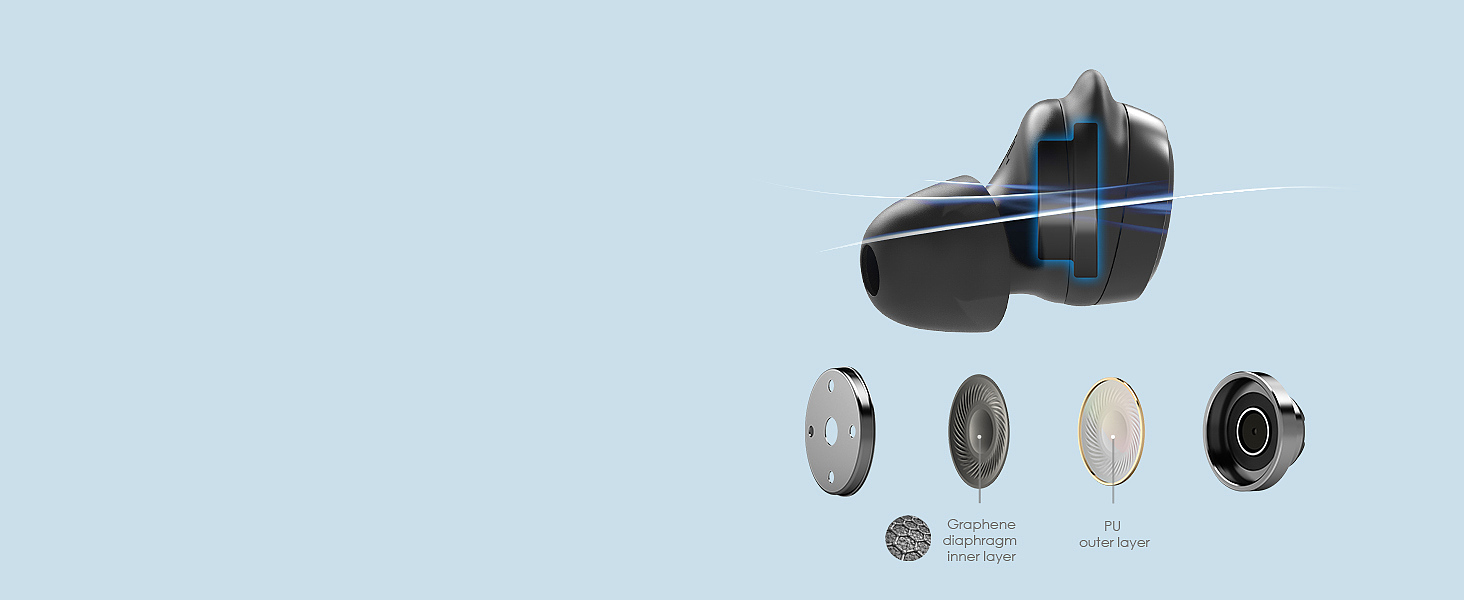

In a highly compact chassis, the diameter of this driver is severely restricted. A 6mm dynamic stereo driver is a study in precise mechanical compromises. The fundamental physics of sound dictate that low-frequency audio (bass) requires the displacement of a large volume of air, which typically requires a large surface area. To generate profound bass from a surface only 6 millimeters across, the driver must rely on increased linear excursion—meaning the diaphragm must travel further forward and backward within the acoustic chamber.
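The trade between surface area and excursion is simple arithmetic if the diaphragm is idealized as a flat piston. The sketch below assumes that idealization, and the 10 mm comparison driver with 0.1 mm of travel is a made-up reference point rather than a measured figure.

```python
import math


def swept_volume_mm3(diameter_mm, excursion_mm):
    """Air volume moved by an ideal flat piston: area times one-way excursion."""
    area = math.pi * (diameter_mm / 2) ** 2
    return area * excursion_mm


def excursion_for_volume_mm(diameter_mm, volume_mm3):
    """One-way excursion a piston of this diameter needs to move volume_mm3."""
    area = math.pi * (diameter_mm / 2) ** 2
    return volume_mm3 / area


# Assumed comparison: a 10 mm driver moving 0.1 mm versus the 6 mm driver.
target = swept_volume_mm3(10.0, 0.1)
needed = excursion_for_volume_mm(6.0, target)
print(round(needed, 3))  # 0.278
```

Because area scales with the square of the diameter, the 6 mm diaphragm needs roughly 2.8 times the travel to move the same volume of air.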

This movement is governed by the Lorentz force. A microscopic voice coil, usually wound from ultra-fine copper-clad aluminum wire (CCAW) to minimize mass, is suspended within the magnetic gap of a permanent magnet (typically a high-flux neodymium alloy). When the alternating current of the audio signal passes through this coil, it generates an oscillating electromagnetic field that interacts with the permanent magnetic field, violently driving the attached diaphragm back and forth.
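For a coil whose wire sits perpendicular to the magnetic field, the Lorentz force reduces to F = B * L * I. The numbers in this sketch (1 T in the gap, 0.5 m of wire, 10 mA of signal current, 10 mg of moving mass) are all assumed for illustration and do not describe any specific driver.

```python
def voice_coil_force_n(flux_density_t, wire_length_m, current_a):
    """Lorentz force on wire perpendicular to the field: F = B * L * I."""
    return flux_density_t * wire_length_m * current_a


# All values below are assumptions chosen only to show the scale involved.
force_n = voice_coil_force_n(flux_density_t=1.0, wire_length_m=0.5, current_a=0.010)
moving_mass_kg = 10e-6                      # assumed diaphragm plus coil mass
acceleration_m_s2 = force_n / moving_mass_kg

print(f"{force_n * 1000:.1f} mN")           # 5.0 mN
print(f"{acceleration_m_s2:.0f} m/s^2")     # 500 m/s^2
```

Even a force of a few millinewtons produces a large acceleration when the moving mass is measured in milligrams, which is why minimizing coil mass matters so much.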

The material science of that diaphragm is the critical variable. If the material is too heavy, its own inertia will smear transient responses; a sharp snare drum hit will sound sluggish. If it is too flimsy, the surface will ripple and deform at high frequencies, a phenomenon known as "breakup," which introduces harsh distortion. Engineers must select polymers or composite materials that stay close to pistonic motion (moving uniformly across their entire 6mm surface) while being light enough to stop almost instantly when the signal ceases. The acoustic tuning of the chamber behind this driver manages the back-wave air pressure, preventing it from choking the diaphragm's movement and allowing those deep bass notes to materialize from such a tiny origin point.

Raw Data vs. Human Perception: The Codec Compromise

Even with a perfect wireless connection, a bandwidth bottleneck exists. An uncompressed CD-quality audio stream requires roughly 1,411 kilobits per second (kbps). Standard Bluetooth profiles cannot reliably sustain this throughput without severely impacting battery life and stability. Therefore, the data must be compressed. This is the domain of the codec (coder-decoder), and it relies not on physics, but on the biological quirks of the human brain.
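The 1,411 kbps figure follows directly from the CD format: 44,100 samples per second, 16 bits per sample, two channels. The sketch below reproduces that arithmetic; the 256 kbps compressed target is an assumed example bitrate, not a figure from the product's specifications.

```python
def pcm_bitrate_kbps(sample_rate_hz, bits_per_sample, channels):
    """Bitrate of uncompressed PCM audio in kilobits per second."""
    return sample_rate_hz * bits_per_sample * channels / 1000


cd_kbps = pcm_bitrate_kbps(44_100, 16, 2)
assumed_codec_kbps = 256  # hypothetical compressed stream bitrate

print(cd_kbps)                                 # 1411.2
print(round(cd_kbps / assumed_codec_kbps, 1))  # 5.5
```

At that assumed target the codec has to discard or re-encode more than four fifths of the raw bits, which is why the choice of what to throw away matters.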

Codecs like Advanced Audio Coding (AAC) and the Subband Codec (SBC) operate on the principles of psychoacoustics—the study of how humans actually perceive sound. The human ear is not an objective microphone; it is a highly biased biological sensor.

One of the primary tools these codecs use is "auditory masking." If a loud sound and a quiet sound of similar frequencies occur simultaneously, the mechanical properties of the basilar membrane inside the inner ear, combined with neural processing, cause the brain to completely ignore the quieter sound. The AAC codec analyzes the incoming audio data in real-time, mathematically models the limitations of human hearing, and aggressively deletes the data for the masked sounds.

Why transmit data that the brain will literally refuse to hear? While SBC is a mandatory, utilitarian codec designed for low computational overhead, AAC uses more elaborate perceptual models and finer frequency resolution to achieve better perceived quality at similar bitrates. By supporting AAC, systems aim to ensure that the translation from large raw files to compressed Bluetooth streams discards mainly the inaudible acoustic shadows, preserving the dynamic highs and profound bass that construct the perceived fidelity of the track.
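The masking principle can be illustrated with a deliberately crude rule: drop any spectral component that sits close in frequency to a much louder one. This is not the AAC psychoacoustic model, which is far more detailed; the half-octave window and 20 dB margin are arbitrary illustrative thresholds, and `prune_masked` is a hypothetical helper.

```python
import math


def prune_masked(components, max_octaves=0.5, margin_db=20.0):
    """Drop components that sit near a much louder neighbor in frequency.

    components: list of (frequency_hz, level_db) tuples.
    A component counts as masked when another component lies within
    max_octaves of it and is at least margin_db louder.
    """
    kept = []
    for freq, level in components:
        masked = any(
            abs(math.log2(other_freq / freq)) <= max_octaves
            and other_level - level >= margin_db
            for other_freq, other_level in components
        )
        if not masked:
            kept.append((freq, level))
    return kept


# Hypothetical frame: a loud 1 kHz tone with a quiet neighbor at 1.1 kHz.
frame = [(1000.0, 80.0), (1100.0, 45.0), (4000.0, 50.0), (4200.0, 48.0)]
print(prune_masked(frame))
# [(1000.0, 80.0), (4000.0, 50.0), (4200.0, 48.0)]
```

Only the 1.1 kHz component is removed: it is 35 dB below a neighbor less than a sixth of an octave away, while the two components near 4 kHz are similar in level and both survive.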

Erasing Sound to Hear More Clearly

Delivering audio to the user is only half the equation; capturing the user's voice and delivering it to someone else across a noisy environment is an entirely different signal processing nightmare. In a traditional handset, the microphone rests centimeters from the mouth. In untethered earbuds, the microphones are located by the tragus of the ear, capturing ambient wind, traffic, and background chatter almost as efficiently as the user's voice.

Historically, dual-microphone setups relied on basic phase cancellation. Sound arriving at the forward-facing microphone slightly before the rear-facing microphone could be isolated based on the time-of-flight difference, creating a directional "beam." However, this struggles against complex, unpredictable noises bouncing off urban surfaces.
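The time-of-flight difference that such a two-microphone beam relies on is small. The sketch below computes it for a plane wave, assuming a 20 mm spacing between the microphones (a guess, not a published dimension) and a speed of sound of 343 m/s.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # dry air at about 20 degrees C


def arrival_delay_s(mic_spacing_m, angle_deg):
    """Extra travel time to the second mic for a plane wave.

    angle_deg is measured from the line joining the two microphones:
    0 means the sound travels straight along that line, 90 means broadside.
    """
    return mic_spacing_m * math.cos(math.radians(angle_deg)) / SPEED_OF_SOUND_M_S


spacing_m = 0.020  # assumed 20 mm between the two microphones
delays_us = {a: round(arrival_delay_s(spacing_m, a) * 1e6, 1) for a in (0, 60, 90)}
print(delays_us)  # {0: 58.3, 60: 29.2, 90: 0.0}
```

At a 48 kHz sample rate the largest of these delays is under three samples, which helps explain why this approach has little margin once reflections blur the arrival times.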

Modern implementations utilize Neural Network Noise Reduction. This is a radical departure from traditional algorithmic filters. Instead of using rigid mathematical thresholds to determine what is "noise" and what is "voice," the system relies on a machine learning model that has been trained on tens of thousands of hours of diverse audio data. The neural network learns the specific spectral footprint of the human vocal tract—the harmonic structures of vowels, the transient bursts of consonants, and the typical cadence of speech.

When a user speaks into a device equipped with this technology, the onboard processor rapidly analyzes the incoming waveform. The neural network identifies the components of the signal that match human speech and ruthlessly subtracts the rest of the acoustic energy in real-time. It doesn't just block noise; it computationally extracts the voice from the surrounding acoustic chaos, ensuring clear call performance even when the physical environment is hostile to communication.
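One common way to realize this kind of extraction is a time-frequency mask: the network outputs a gain between 0 and 1 for each frequency bin, and the noisy spectrum is scaled by it. Whether this product works that way is not stated, so treat the sketch as an assumption; the mask values are hard-coded here in place of a trained model's output.

```python
def apply_mask(noisy_magnitudes, speech_mask):
    """Scale each frequency bin by the estimated speech share (0 to 1)."""
    if len(noisy_magnitudes) != len(speech_mask):
        raise ValueError("mask and spectrum must have the same number of bins")
    return [m * g for m, g in zip(noisy_magnitudes, speech_mask)]


# Hypothetical frame: bins 1 and 2 carry speech, bins 0 and 3 are mostly noise.
noisy = [0.8, 1.0, 0.6, 0.9]
mask = [0.0, 1.0, 0.5, 0.0]  # stand-in for the trained network's output
print(apply_mask(noisy, mask))  # [0.0, 1.0, 0.3, 0.0]
```

The intelligence lives entirely in how the mask is predicted; applying it is a cheap per-bin multiplication, which suits a low-power earbud processor.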

The Chemical Reservoir in Your Pocket

None of this processing, transceiving, or physical actuation can occur without a stable flow of electrons. The limitation of untethered design is absolute reliance on internal energy storage. Achieving 32 hours of cumulative playtime in a system where each earbud weighs only 4 grams is a testament to the maturation of lithium-ion electrochemistry and aggressive power state management.

Within the minuscule cavity of a 4-gram earbud sits a high-density lithium coin cell or pouch cell. During discharge, lithium ions migrate from the graphite anode through a separator soaked in liquid electrolyte to the metal oxide cathode, while the matching electrons travel through the external circuit to power the Bluetooth System-on-Chip (SoC) and the amplifier.

Because the volumetric constraints inside the earbud limit this cell to a capacity of perhaps 30-50 milliampere-hours (mAh)—yielding roughly 6 hours of continuous discharge—the system relies on a symbiotic relationship with its charging case. The 28g case acts as a master reservoir, housing a significantly larger battery.
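The figures above imply a rough power budget. The sketch below works through it using the 32-hour total and 6-hour per-charge numbers from the text, plus an assumed 40 mAh cell (the midpoint of the 30-50 mAh range). It ignores conversion losses, so the case capacity is a lower bound, not a specification.

```python
def average_current_ma(capacity_mah, runtime_h):
    """Average draw that empties the cell in the given runtime."""
    return capacity_mah / runtime_h


def case_recharges(total_hours, hours_per_charge):
    """Full earbud recharges the case must supply after the first charge."""
    return (total_hours - hours_per_charge) / hours_per_charge


cell_mah = 40  # assumed midpoint of the 30-50 mAh range
recharges = case_recharges(32, 6)

print(round(average_current_ma(cell_mah, 6), 1))  # 6.7
print(round(recharges, 1))                        # 4.3
print(round(recharges * 2 * cell_mah))            # 347
```

Under these assumptions each earbud averages under 7 mA, and the case must deliver at least about 347 mAh across both earbuds before charging losses are counted.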

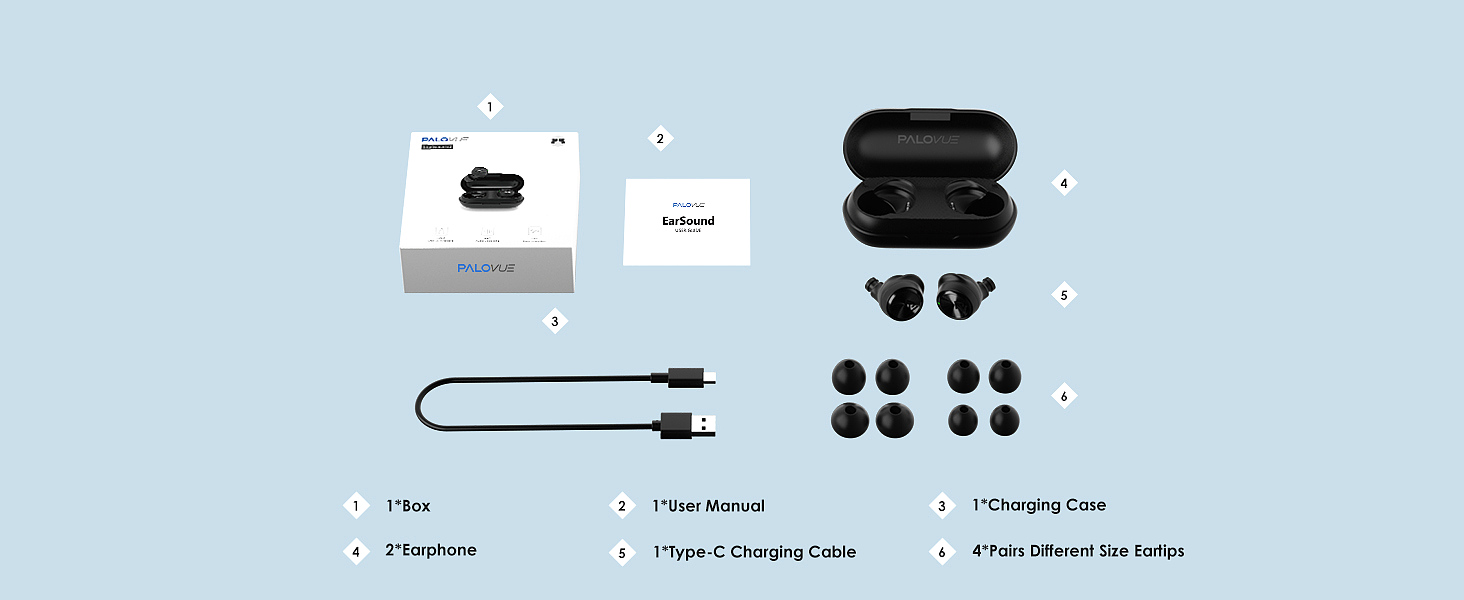

The electrical engineering magic lies in the Power Management Integrated Circuits (PMIC). When the earbuds drop into the case, contact pins establish a connection. The PMIC meticulously controls the voltage and current flowing into the delicate earbud cells. It must charge them fast enough to be convenient, but carefully enough to prevent lithium plating (which degrades capacity) or thermal runaway. This tiered architecture—a small, highly active chemical cell frequently replenished by a larger, dormant reservoir—is the only way to reconcile the conflicting demands of ultra-lightweight ergonomics and all-day operational endurance.
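A common charging profile for lithium-ion cells is constant-current, constant-voltage (CC-CV): hold a fixed current until the terminal voltage reaches its limit, then hold that voltage while the current tapers off. The text does not say which profile this product uses, so the following is a toy simulation under assumptions: a linear open-circuit voltage curve, a fixed 1 ohm internal resistance, and invented current limits.

```python
def simulate_cc_cv(capacity_mah=40.0, cc_ma=20.0, v_max=4.2,
                   cutoff_ma=2.0, resistance_ohm=1.0, dt_s=1.0):
    """Toy CC-CV charge of a cell with a linear open-circuit voltage curve.

    Returns (minutes_elapsed, final_state_of_charge).
    """
    soc = 0.0          # state of charge, 0 (empty) to 1 (full)
    elapsed_s = 0.0
    while True:
        ocv = 3.0 + 1.2 * soc  # assumed linear curve: 3.0 V empty, 4.2 V full
        # Largest current that keeps the terminal voltage at or below v_max.
        cv_limit_ma = (v_max - ocv) / resistance_ohm * 1000.0
        current_ma = min(cc_ma, cv_limit_ma)  # CC phase until the CV limit bites
        if current_ma < cutoff_ma:            # taper current too small: stop
            return elapsed_s / 60.0, soc
        soc += current_ma * dt_s / 3600.0 / capacity_mah
        elapsed_s += dt_s


minutes, final_soc = simulate_cc_cv()
print(f"{minutes:.0f} min, {final_soc:.1%} charged")
```

Raising `cc_ma` shortens the constant-current phase, which is the convenience side of the trade; the voltage ceiling and cutoff are what protect the cell at the top of the charge.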

Sealing the Canal Against the Elements

The final interface is purely physical. The most advanced digital-to-analog converter and the most perfectly tuned dynamic driver are rendered useless if acoustic coupling with the human ear is compromised.

An earbud operating in the open air cannot produce meaningful bass. Low-frequency sound waves wrap around the device and cancel themselves out in a process known as dipole phase cancellation. To generate deep bass, the earbud must turn the ear canal into a sealed, pressurized acoustic chamber. This is why ergonomic design and the inclusion of various silicone eartips are not mere accessories, but fundamental acoustic components. The silicone must deform to match the unique topography of the user's ear canal, creating a near-airtight seal that traps the air mass, allowing the 6mm driver to push and pull on the trapped air column that couples directly to the eardrum.

Simultaneously, this precise physical fit must be defended against the environment. The biological reality of human exertion (sweat) and external weather (rain) introduce highly conductive, corrosive fluids to sensitive electronics.

An IPX5 rating defines a specific water-resistance threshold under the IEC 60529 standard. It dictates that the enclosure must protect the internal PCB, battery, and transducer against water jets from any direction (12.5 liters per minute through a 6.3mm nozzle). To achieve this, the seams of the ultrasonically welded plastic chassis are lined with precision-cut silicone gaskets. The acoustic ports, which must allow air to flow so sound can escape, are covered with microscopic hydrophobic meshes. These meshes utilize surface tension physics; the pores are large enough for air molecules to pass freely, but small enough that the cohesive forces of water molecules prevent liquid droplets from breaching the barrier.
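The surface-tension argument can be put into numbers with the Young-Laplace relation for an idealized cylindrical pore, where the pressure needed to force water in is -4 * gamma * cos(theta) / d. The sketch below compares that with the jet from the test figures above. The 20 micrometer pore and 120 degree contact angle are assumed values, and the jet's stagnation pressure at the nozzle exit is only an upper bound, since the jet slows and spreads before it reaches the device.

```python
import math

WATER_SURFACE_TENSION_N_M = 0.072  # water near room temperature
WATER_DENSITY_KG_M3 = 1000.0


def entry_pressure_pa(pore_diameter_m, contact_angle_deg):
    """Pressure needed to push water into an idealized cylindrical pore."""
    cos_theta = math.cos(math.radians(contact_angle_deg))
    return -4.0 * WATER_SURFACE_TENSION_N_M * cos_theta / pore_diameter_m


def jet_exit_velocity_m_s(flow_l_per_min, nozzle_diameter_m):
    """Mean water velocity leaving a round nozzle at the given flow rate."""
    flow_m3_s = flow_l_per_min / 1000.0 / 60.0
    area_m2 = math.pi * (nozzle_diameter_m / 2.0) ** 2
    return flow_m3_s / area_m2


velocity = jet_exit_velocity_m_s(12.5, 0.0063)          # IPX5 test figures
stagnation_pa = 0.5 * WATER_DENSITY_KG_M3 * velocity ** 2

print(round(velocity, 1))                      # 6.7
print(round(stagnation_pa / 1000.0, 1))        # 22.3
print(round(entry_pressure_pa(20e-6, 120.0)))  # 7200
```

Entry pressure scales inversely with pore diameter, so halving the pore size doubles the pressure the mesh can hold back; a contact angle below 90 degrees makes the result negative, meaning the pore would wick water in instead of repelling it.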

Ultimately, the creation of a modern wireless audio device represents a massive convergence of disciplines. It forces radio frequency engineers, material scientists, data theorists, and fluid dynamicists to collaborate within a space measured in millimeters. The result is a device that seamlessly translates abstract digital logic into the deeply intimate, biological experience of sound.
