The Science of Wireless Audio: How Your Earbuds Create Immersive Sound

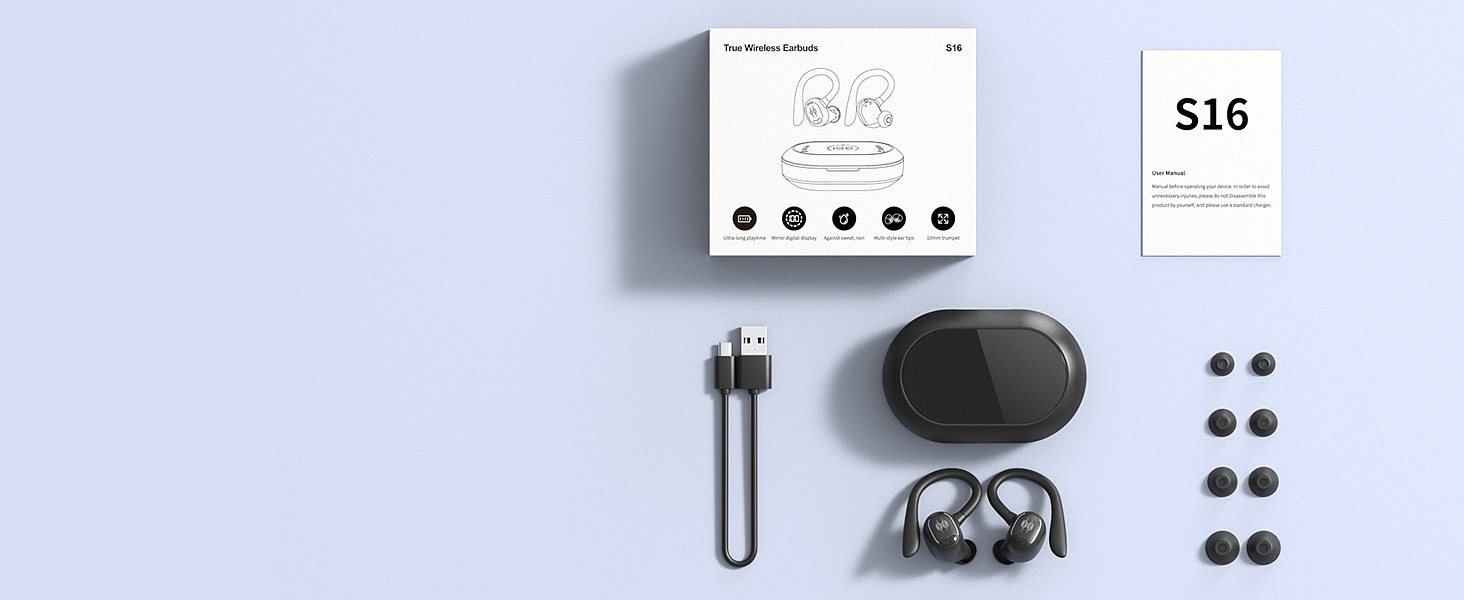

PSIER S16 Wireless Earbuds

You press play. A song fills your head, seemingly from nowhere. No wires. No speakers. Just two small pieces of plastic, each smaller than a jellybean, conjuring an entire orchestra inside your skull. Wireless earbuds have become so commonplace that we rarely stop to ask a deceptively simple question: how does music actually travel from a digital file on your phone to the immersive soundscape you perceive in your mind?

The answer is a chain of translations, each one a minor miracle of engineering. At every stage, something is lost, transformed, or approximated, and yet the final result can move us to tears. Understanding this chain changes how you hear everything.

The Invisible Data Stream: How Bluetooth Carries Your Music

Before sound can exist, data must move. When you tap play on your phone, the device locates the audio file, decodes it, and re-encodes it for transmission using a codec, the algorithmic language your phone and earbuds use to communicate. Which codec they use is settled in a negotiation most people never think about, usually when the earbuds first connect rather than each time you press play.
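
Which codec wins can be pictured as a simple matching step. The sketch below is a deliberate simplification. The real A2DP procedure exchanges capabilities and configures streams over the AVDTP protocol. This version assumes the phone holds an ordered preference list and the earbuds advertise the codecs they support. The first match wins, and SBC is the fallback because A2DP makes it mandatory. The function name and the preference order are hypothetical.

```python
from typing import Iterable

# SBC is mandatory in A2DP, so every sink can fall back to it.
MANDATORY_CODEC = "SBC"

def choose_codec(phone_preferences: Iterable[str], earbud_supported: set[str]) -> str:
    """Return the phone's most-preferred codec that the earbuds also support.

    Hypothetical helper: real devices also agree on parameters such as
    sample rate, channel mode, and bitrate during stream configuration.
    """
    for codec in phone_preferences:
        if codec in earbud_supported:
            return codec
    return MANDATORY_CODEC

# Example: the phone prefers LDAC, then aptX, then AAC; these earbuds lack LDAC.
print(choose_codec(["LDAC", "aptX", "AAC", "SBC"], {"SBC", "AAC", "aptX"}))  # aptX
```

Falling back to SBC when nothing else matches is exactly what a mandatory baseline is for: any A2DP pairing can always agree on it.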

Bluetooth audio follows the A2DP (Advanced Audio Distribution Profile) specification, a standard maintained by the Bluetooth Special Interest Group. Think of it as a postal system for sound. Your phone encodes audio into packets and transmits them over 2.4GHz radio waves, and your earbuds reassemble and decode them. The cycle repeats many times every second, at a rate that depends on the codec and how much audio each packet carries.
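
Counting codec frames gives a feel for how often audio is packaged. This sketch assumes 44.1 kHz audio and two common frame sizes. The first is SBC's largest frame, 16 blocks x 8 subbands, or 128 samples per channel. The second is the 1,024-sample frame of AAC-LC. A single Bluetooth packet can bundle several SBC frames, so the packet rate can be lower than the frame rate computed here.

```python
# Samples per channel carried by one codec frame, in common configurations.
SBC_SAMPLES_PER_FRAME = 16 * 8      # 16 blocks x 8 subbands, SBC's largest frame
AAC_LC_SAMPLES_PER_FRAME = 1024     # standard AAC-LC frame length

def frames_per_second(sample_rate_hz: int, samples_per_frame: int) -> float:
    """Codec frames needed each second to keep pace with playback."""
    return sample_rate_hz / samples_per_frame

def frame_duration_ms(sample_rate_hz: int, samples_per_frame: int) -> float:
    """Audio time covered by a single frame, in milliseconds."""
    return 1000 * samples_per_frame / sample_rate_hz

for name, samples in (("SBC", SBC_SAMPLES_PER_FRAME), ("AAC-LC", AAC_LC_SAMPLES_PER_FRAME)):
    rate = frames_per_second(44_100, samples)
    duration = frame_duration_ms(44_100, samples)
    print(f"{name}: {rate:.1f} frames/s, {duration:.2f} ms of audio per frame")
```

At 44.1 kHz this prints about 344.5 SBC frames per second (2.90 ms each) versus about 43.1 AAC-LC frames per second (23.22 ms each). That gap is why "how many times per second" depends heavily on the codec.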

The choice of codec matters enormously. SBC, the mandatory baseline codec, runs at up to about 328 kbps in its common high-quality setting and provides perfectly functional audio. AAC, favored by Apple devices, uses more sophisticated psychoacoustic modeling to decide which frequencies the human ear can afford to lose. aptX, a codec family now owned by Qualcomm, targets lower latency, meaning the delay between audio leaving your phone and reaching your ears. Its aptX Low Latency variant goes furthest and helps keep sound in sync with video. And LDAC, Sony's contribution, pushes bitrates up to 990 kbps, inching closer to the quality of wired connections.
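
A quick calculation puts these bitrates in context. The sketch assumes a CD-quality source (44.1 kHz, 16-bit, stereo) as the uncompressed reference and uses the maximum SBC and LDAC bitrates quoted above. LDAC can also carry higher-resolution sources, so this comparison is only a reference point.

```python
# Uncompressed CD-quality PCM: 44.1 kHz sample rate, 16 bits per sample, 2 channels.
SAMPLE_RATE_HZ = 44_100
BITS_PER_SAMPLE = 16
CHANNELS = 2

def pcm_bitrate_kbps(rate_hz: int, bits: int, channels: int) -> float:
    """Bitrate of raw PCM audio in kilobits per second."""
    return rate_hz * bits * channels / 1000

# Maximum bitrates quoted in the text for two Bluetooth codecs.
CODEC_MAX_KBPS = {"SBC": 328, "LDAC": 990}

pcm = pcm_bitrate_kbps(SAMPLE_RATE_HZ, BITS_PER_SAMPLE, CHANNELS)
print(f"CD-quality PCM: {pcm:.1f} kbps")
for codec, kbps in CODEC_MAX_KBPS.items():
    # Compression ratio relative to the uncompressed reference.
    print(f"{codec} at {kbps} kbps: about {pcm / kbps:.1f}x smaller than PCM")
```

Uncompressed CD-quality audio needs about 1,411 kbps. SBC at 328 kbps therefore keeps a little under a quarter of that data rate, and LDAC at 990 kbps keeps about 70 percent.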

But here is the uncomfortable truth that audio engineers acknowledge: in rigorous blind tests, most listeners cannot reliably distinguish between a well-encoded 320 kbps stream and an uncompressed original. The codec battle is real, but the differences exist at the margins of perception, not at its center. What matters far more is everything that happens after the data arrives.

Inside the Driver: Turning Electricity Into Sound

Once decoded, the audio signal is still just electrical voltage. Converting that voltage into the pressure waves we call sound requires a transducer, and in most wireless earbuds, that transducer is a dynamic driver.

A dynamic driver is an elegant machine in miniature. At its center sits a diaphragm, a membrane typically made from PET plastic, bio-composite materials, or beryllium-coated film. Attached to this diaphragm is a voice coil, a cylinder wound from fine wire, usually copper. Surrounding the coil is a permanent magnet. When the amplified audio signal flows through the voice coil, it generates a fluctuating magnetic field. This field pushes against the permanent magnet's field with a force proportional to the current. That force drives the voice coil back and forth in step with the signal, and the diaphragm moves with it. The diaphragm's movement displaces air, and those displacements are what your ear detects as sound.
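
Physics texts usually describe this push as the force on a current-carrying wire in a magnetic field, F = B × l × i. Here B is the flux density in the magnet gap, l is the length of wire in the gap, and i is the current. The values below are hypothetical and only illustrate the proportionality. They are not measurements of any particular driver.

```python
def voice_coil_force(flux_density_t: float, wire_length_m: float, current_a: float) -> float:
    """Force on a coil in a magnetic gap, F = B * l * i, in newtons."""
    return flux_density_t * wire_length_m * current_a

# Hypothetical values for a small earbud driver, for illustration only.
B = 0.8          # tesla in the magnet gap
WIRE_LEN = 0.5   # metres of coil wire sitting in the gap

# The force scales linearly with the signal current flowing through the coil.
for current_ma in (1.0, 2.0, 5.0):
    force_n = voice_coil_force(B, WIRE_LEN, current_ma / 1000)
    print(f"{current_ma:.1f} mA -> {force_n * 1000:.2f} mN")
```

Doubling the current doubles the force, and reversing the current reverses it. This is how the coil's motion follows the audio waveform.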

The physics here is deceptively simple but fiendishly difficult to execute well in a space the size of a thumbnail. Larger drivers generally produce better bass because they can move more air per cycle and operate at lower resonant frequencies. Earbud drivers typically range from 8mm to 14mm in diameter, which means engineers must work within severe constraints. Creating impactful bass from a driver this small requires clever acoustic engineering: tight ear seals, tuned bass ports, and digital signal processing that compensates for the physical limitations of small membranes.
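
The advantage of a larger diaphragm can be estimated by treating it as a flat piston. The air it displaces per stroke is its area times its excursion, and area grows with the square of the diameter. The sketch assumes the full diameter radiates and uses a hypothetical 0.1 mm excursion for both drivers.

```python
import math

def radiating_area_mm2(diameter_mm: float) -> float:
    """Area of a circular diaphragm, treating the full diameter as radiating (a simplification)."""
    return math.pi * (diameter_mm / 2) ** 2

def displaced_volume_mm3(diameter_mm: float, excursion_mm: float) -> float:
    """Air volume swept by a piston-like diaphragm moving by excursion_mm."""
    return radiating_area_mm2(diameter_mm) * excursion_mm

EXCURSION_MM = 0.1  # hypothetical peak excursion, identical for both drivers

small = displaced_volume_mm3(8, EXCURSION_MM)
large = displaced_volume_mm3(14, EXCURSION_MM)
print(f"8 mm driver:  {small:.2f} mm^3 per stroke")
print(f"14 mm driver: {large:.2f} mm^3 per stroke")
print(f"ratio: {large / small:.2f}x")  # equals (14/8)**2, about 3.06
```

At equal excursion, the 14 mm driver moves (14/8)² ≈ 3.06 times as much air as the 8 mm one. Smaller drivers must make up that gap with seals, ports, and DSP.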

Driver materials matter too. Bio-cellulose diaphragms, like those found in the PSIER S16 earbuds, offer a favorable stiffness-to-weight ratio, allowing the membrane to start and stop moving quickly. This translates to better transient response, the ability to reproduce the sharp attack of a snare drum or the pluck of a guitar string without blurring the sound. Beryllium-coated diaphragms offer similar benefits at higher price points, while standard PET films remain the most common and cost-effective option.
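
Stiffness-to-weight can be seen at work in a thin plate. Take a circular plate of fixed size and thickness, with the same Poisson's ratio across materials. The frequencies of its bending modes scale with the square root of Young's modulus divided by density. Higher bending modes keep the membrane moving as a unit over a wider range instead of flexing. This sketch drops the geometric constant, so only ratios are meaningful, and the material properties are made-up values for illustration.

```python
import math

def relative_bending_frequency(youngs_modulus_pa: float, density_kg_m3: float) -> float:
    """Relative bending-mode frequency of a thin plate at fixed geometry.

    Bending-mode frequencies scale with sqrt(E / rho) when size, thickness,
    and Poisson's ratio are held fixed; the geometric constant is omitted.
    """
    return math.sqrt(youngs_modulus_pa / density_kg_m3)

# Hypothetical membrane materials with illustrative properties.
baseline = relative_bending_frequency(4e9, 1400)   # a generic polymer film
stiffer = relative_bending_frequency(8e9, 1400)    # twice as stiff, same density
lighter = relative_bending_frequency(4e9, 700)     # same stiffness, half the density

print(f"twice as stiff:   {stiffer / baseline:.3f}x the baseline frequency")
print(f"half the density: {lighter / baseline:.3f}x the baseline frequency")
```

Doubling stiffness and halving density both raise these frequencies by the same factor of √2 ≈ 1.414. That is why engineers quote the ratio rather than stiffness or weight alone.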

The Physics of Air and Acoustics: Sound Waves in Your Ear

When the driver moves, it creates pressure waves. But these waves do not travel freely into your auditory system. They enter the ear canal, a tube roughly 25 millimeters long and about 7 millimeters in diameter in a typical adult, though individual canals vary. That tube fundamentally shapes what you hear.

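The ear canal is a resonator. An organ pipe amplifies certain frequencies based on its length. In the same way, your open ear canal, which behaves roughly like a tube closed at one end by the eardrum, naturally boosts frequencies around 2700 to 3800 Hz. This is not a design flaw; it is an evolutionary feature. Human hearing is most sensitive in this range, which overlaps with the frequencies that matter most for understanding speech and detecting environmental sounds. When an ear tip seals the canal, that natural boost is altered, so earbud makers typically build a comparable lift into their tuning.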
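
The organ-pipe analogy can be made quantitative. Treat the open ear canal as a rigid tube closed at one end by the eardrum. Its first resonance falls where the tube is a quarter wavelength long, f = c / (4L). This sketch assumes a speed of sound of 343 m/s and ignores the canal's bends and the eardrum's flexibility. It gives a ballpark figure for the unoccluded ear, not a precise prediction.

```python
SPEED_OF_SOUND_M_S = 343.0  # in air at roughly 20 degrees Celsius

def quarter_wave_resonance_hz(length_mm: float, c: float = SPEED_OF_SOUND_M_S) -> float:
    """First resonance of a tube closed at one end: f = c / (4 * L)."""
    return c / (4 * length_mm / 1000)

for length in (22, 25, 30):
    print(f"{length} mm canal -> about {quarter_wave_resonance_hz(length):.0f} Hz")
```

A 25 mm canal comes out at about 3,430 Hz, inside the range given above. Shorter canals resonate higher and longer ones lower.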

Because the canal is part of the acoustic system, the seal between the ear tip and your ear canal is arguably the single most important factor in how your earbuds sound. A poor seal allows bass frequencies to leak out, resulting in thin, tinny audio. A proper seal creates a closed acoustic chamber that lets the driver's bass output build pressure inside the canal. This is why earbuds ship with multiple tip sizes. It is also why five minutes spent trying different tips can improve your listening experience more than upgrading to a more expensive pair.
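
One simplified way to picture a leak is as a first-order high-pass filter. Below a cutoff frequency set by the leak, pressure escapes faster than the driver can build it, and the response falls at about 6 dB per octave. Real leaks also have mass-like behavior that this model ignores. Both cutoff values below are hypothetical.

```python
import math

def highpass_gain_db(freq_hz: float, cutoff_hz: float) -> float:
    """Magnitude of a first-order high-pass filter, in decibels."""
    ratio = freq_hz / cutoff_hz
    return 20 * math.log10(ratio / math.sqrt(1 + ratio ** 2))

# Hypothetical cutoffs: a tight seal leaks only far below the audible bass,
# while a poor seal lets bass escape well into the audible range.
TIGHT_SEAL_CUTOFF_HZ = 10
LEAKY_SEAL_CUTOFF_HZ = 200

for freq in (50, 100, 1000):
    tight = highpass_gain_db(freq, TIGHT_SEAL_CUTOFF_HZ)
    leaky = highpass_gain_db(freq, LEAKY_SEAL_CUTOFF_HZ)
    print(f"{freq:>5} Hz: tight seal {tight:6.1f} dB, leaky seal {leaky:6.1f} dB")
```

With the hypothetical 200 Hz cutoff, a 50 Hz bass note arrives about 12 dB quieter than with a tight seal, while 1 kHz is almost unaffected. The result is the thin, bass-light sound of a poorly fitted tip.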

Foam tips compress during insertion and then expand to fill the ear canal, creating a more consistent seal than silicone tips for many users. Silicone tips offer easier insertion and removal but may not conform to irregularly shaped canals. The right choice is deeply personal and depends largely on the geometry of your ears.

Psychoacoustics: How Your Brain Builds a 3D Sound World

Sound becomes immersive not when it reaches your eardrum, but when your brain interprets it. The field of psychoacoustics studies this interpretation, and it reveals something remarkable: your brain constructs the perception of three-dimensional space from surprisingly sparse acoustic cues.

Three primary mechanisms drive spatial hearing. Interaural Time Difference (ITD) refers to the fact that sound arriving from directly to your left reaches your left ear approximately 0.6 milliseconds before your right ear. Your brain can detect timing differences of just tens of microseconds and relies on them mainly to localize low-frequency sounds. Interaural Level Difference (ILD) captures the fact that your head casts an acoustic shadow. That shadow blocks high frequencies more effectively than low ones, so a high-pitched sound from your left is louder at your left ear. And spectral cues, created by the complex shape of your outer ear (pinna), help you distinguish whether a sound is in front of you or behind you, above you or below you.
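
Woodworth's classic spherical-head approximation turns the ITD into a formula. For a distant source at azimuth θ, ITD ≈ (r/c)(θ + sin θ). The sketch assumes a head radius of 8.75 cm and a speed of sound of 343 m/s. Real heads are not spheres, so treat the results as estimates.

```python
import math

HEAD_RADIUS_M = 0.0875      # assumed average adult head radius
SPEED_OF_SOUND_M_S = 343.0

def woodworth_itd_s(azimuth_deg: float, r: float = HEAD_RADIUS_M, c: float = SPEED_OF_SOUND_M_S) -> float:
    """Interaural time difference (seconds) from Woodworth's spherical-head model.

    Valid for azimuths from 0 (straight ahead) to 90 degrees (directly to one side).
    """
    theta = math.radians(azimuth_deg)
    return (r / c) * (theta + math.sin(theta))

for azimuth in (0, 30, 60, 90):
    print(f"{azimuth:>2} deg -> {woodworth_itd_s(azimuth) * 1000:.3f} ms")
```

A source directly to one side (90 degrees) gives about 0.66 ms, consistent with the roughly 0.6 millisecond figure above. A source 30 degrees off center gives about 0.26 ms.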

These three cues combine into what acousticians call a Head-Related Transfer Function, or HRTF. An HRTF is essentially a mathematical fingerprint of how sound from every direction is filtered by your head, torso, and outer ear before reaching your eardrum. The critical insight: every person's HRTF is unique, as individual as a fingerprint, because no two heads, ears, or torsos are shaped exactly alike.
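
Applying an HRTF to a sound is typically done by filtering. In the time domain, the mono source is convolved with a head-related impulse response (HRIR) for each ear. The sketch below uses made-up five-tap HRIRs purely to show the mechanics. Real HRIRs are measured and run to hundreds of samples, and the names here are hypothetical.

```python
def convolve(signal: list[float], impulse_response: list[float]) -> list[float]:
    """Direct linear convolution; output length is len(signal) + len(impulse_response) - 1."""
    out = [0.0] * (len(signal) + len(impulse_response) - 1)
    for i, x in enumerate(signal):
        for j, h in enumerate(impulse_response):
            out[i + j] += x * h
    return out

# Toy HRIRs for a source on the listener's left (hypothetical values):
# the near (left) ear hears it at once; the far (right) ear 3 samples later and quieter.
HRIR_LEFT = [1.0, 0.3, 0.0, 0.0, 0.0]
HRIR_RIGHT = [0.0, 0.0, 0.0, 0.5, 0.15]

def render_binaural(mono: list[float], hrir_left: list[float], hrir_right: list[float]):
    """Filter one mono signal into a (left, right) pair for headphone playback."""
    return convolve(mono, hrir_left), convolve(mono, hrir_right)

left, right = render_binaural([1.0, 0.0, -1.0], HRIR_LEFT, HRIR_RIGHT)
print("left: ", left)
print("right:", right)
```

In the output, the right channel is a delayed, quieter copy of the left, combining a time difference and a level difference in one filter. Measured HRIRs add the pinna's spectral coloring on top.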

This is why generic spatial audio, the kind that Dolby Atmos or Apple Spatial Audio applies by default, works reasonably well for most people but often falls short of the convincing externalization that real speakers provide: it uses an averaged HRTF rather than yours. Emerging research from institutions like the University of Illinois and the University of Washington suggests that earbuds can estimate personalized HRTFs using their built-in microphones, potentially enabling a future where spatial audio is calibrated to your specific anatomy.

The Complete Signal Chain: From Digital File to Brain

Understanding immersion requires seeing the entire journey at once. A compressed audio file sits on your phone. The processor reads and decodes it, and the Bluetooth chip re-encodes it using the negotiated codec. Radio waves carry it across a short stretch of air. The earbud's chip decodes it back to uncompressed digital audio. A digital signal processor applies equalization, bass enhancement, and spatial audio effects. A digital-to-analog converter transforms it into voltage. An amplifier boosts the signal. The voice coil moves, the diaphragm vibrates, and pressure waves enter your ear canal. The sealed chamber of your canal shapes the frequency response. Your eardrum vibrates, the ossicles amplify, the cochlea separates frequencies along its basilar membrane, and hair cells convert mechanical motion into neural impulses. Your auditory cortex receives these impulses and constructs the perception of a singer standing in front of you, a guitar to your left, drums behind.
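
In software terms this chain is a pipeline: each stage takes the previous stage's output as its input. The toy sketch below models only three stages as plain Python functions. The stage names, the coarse rounding that stands in for lossy coding, and the gain values are all hypothetical simplifications. None of it reflects how real earbud firmware is written.

```python
from functools import reduce
from typing import Callable

Signal = list[float]
Stage = Callable[[Signal], Signal]

def toy_codec(samples: Signal) -> Signal:
    """Stand-in for lossy coding: snap each sample to a coarse grid of 1/64 steps."""
    return [round(s * 64) / 64 for s in samples]

def toy_eq(samples: Signal) -> Signal:
    """Stand-in for DSP equalization: a flat 0.9x gain."""
    return [0.9 * s for s in samples]

def toy_dac_and_amp(samples: Signal) -> Signal:
    """Stand-in for the DAC and amplifier: map digital full scale (1.0) to 0.5 volts."""
    return [0.5 * s for s in samples]

CHAIN: list[Stage] = [toy_codec, toy_eq, toy_dac_and_amp]

def run_chain(samples: Signal, stages: list[Stage]) -> Signal:
    """Feed the output of each stage into the next, in order."""
    return reduce(lambda signal, stage: stage(signal), stages, samples)

volts = run_chain([0.1, 0.5, -0.333], CHAIN)
print(volts)
```

Each stage sees only what the previous one produced. Anything discarded early, such as the detail lost to rounding here, cannot be recovered later in the chain.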

Every step in this chain introduces coloration or loss. The codec discards data deemed perceptually redundant. The driver has a frequency response that emphasizes some frequencies over others. The ear canal and its seal shape the response around 3kHz. Your individual HRTF filters the spatial information. And yet, somehow, the brain assembles a coherent, emotionally resonant experience from these compromised signals. This is the true miracle of wireless audio: not the technology itself, but the brain's extraordinary ability to extract meaning from approximation.
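
Some stages behave like linear filters, such as the driver, equalization, and the ear canal. For these, coloration compounds in a predictable way: amplitude gains multiply, so deviations expressed in decibels simply add. The per-stage values below are hypothetical deviations from flat at a single frequency. Codec loss is left out because it is not a simple filter.

```python
import math

def db_to_linear(db: float) -> float:
    """Convert a level change in decibels to a linear amplitude factor."""
    return 10 ** (db / 20)

def linear_to_db(factor: float) -> float:
    """Convert a linear amplitude factor back to decibels."""
    return 20 * math.log10(factor)

# Hypothetical deviations from a flat response at one frequency, one per stage.
STAGE_DEVIATIONS_DB = {
    "driver": +2.0,
    "DSP equalization": -1.0,
    "ear canal and seal": +3.0,
}

# Adding decibels is equivalent to multiplying the linear gains.
total_db = sum(STAGE_DEVIATIONS_DB.values())
total_linear = math.prod(db_to_linear(db) for db in STAGE_DEVIATIONS_DB.values())

print(f"sum of stage deviations: {total_db:+.1f} dB")
print(f"product of linear gains: {linear_to_db(total_linear):+.1f} dB")
```

Both lines print +4.0 dB. A bump in one stage and a dip in another can partly cancel, or they can pile up.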

This is also why two earbuds with identical specifications can sound noticeably different. The specification sheet captures only a few points on this complex chain. It cannot capture the interaction between driver tuning and ear canal acoustics, or how a particular codec's psychoacoustic model interacts with your hearing sensitivity at different frequencies.

What This Means When You Press Play

The next time you put on wireless earbuds and close your eyes, consider what is happening. A digital file is being torn into packets, hurled through the air as radio waves, caught and reassembled, converted from numbers to voltage to movement to air pressure to neural electricity. Your brain is taking these fragmented signals and constructing an entire world of sound, placing instruments in space, detecting the emotional tone of a voice, feeling the physical punch of the bass.

None of this was designed from scratch. Bluetooth builds on decades of radio engineering. Dynamic drivers descend from telephone technology invented in the nineteenth century. Psychoacoustics draws on research into how the human auditory system evolved to help our ancestors survive. What makes modern wireless earbuds remarkable is not any single innovation but the integration of all these disciplines into a device you can lose in a couch cushion.

The science of wireless audio is, at its core, a story about translation. Not perfect translation, because perfect translation does not exist in any domain. But good enough translation that your brain, the most sophisticated signal processor ever evolved, can fill in the gaps and construct an experience that feels, in the moment, indistinguishable from reality. And perhaps that is the most profound insight of all: immersion is not a property of the device. It is a collaboration between engineering and perception, between what the earbuds provide and what your brain completes. The earbuds do not create the sound you hear. They create the conditions under which your mind creates it.
