The Invisible Compression: How Wireless Audio Codecs Preserve Sound Quality

Updated on March 15, 2026, 5:53 p.m.

In 1982, the compact disc promised perfect sound forever. The shiny 12-centimeter platter could hold 74 minutes of digital audio, sampled 44,100 times per second with 16-bit precision, a mathematical representation so complete that the engineers at Philips and Sony declared it “inaudibly transparent.” More than forty years later, we stream music through invisible radio waves to devices smaller than a quarter, and somehow, the quality is nearly indistinguishable.

This is not magic. It is the result of decades of work in information theory, digital signal processing, and a peculiar kind of engineering cleverness: the art of throwing away data without throwing away the experience.

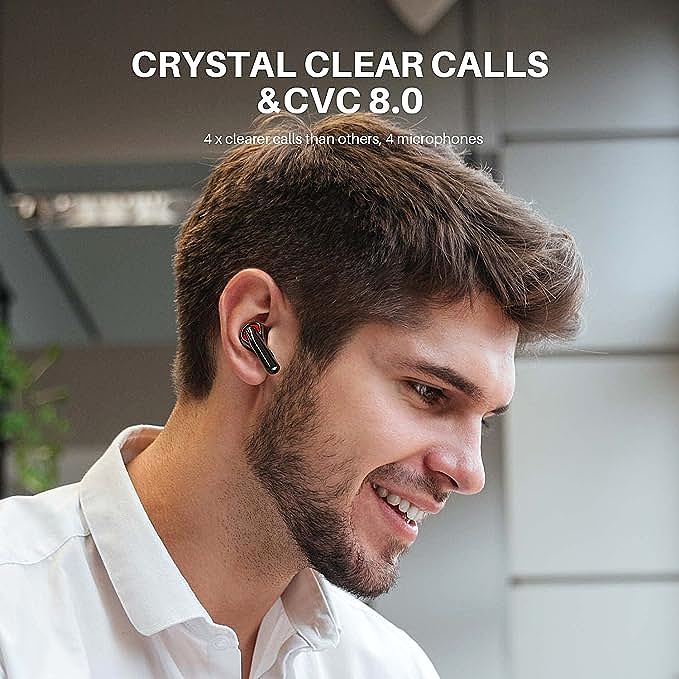

The modern wireless earbud—from budget-friendly options like the Tribit FlyBuds C1 to premium audiophile gear—relies on a hidden technology that most consumers never consider: the audio codec. These software algorithms compress, transmit, and reconstruct sound in real time, navigating the treacherous waters between data efficiency and auditory fidelity. Understanding how they work reveals not just the engineering of wireless audio, but a deeper truth about what we actually hear when we listen to music.

Shannon’s Gift: Why 352 Kilobits Can Sound Like 1,400

In 1948, Claude Shannon published “A Mathematical Theory of Communication,” a paper so foundational that it created an entire field of study. Among its many insights was a deceptively simple question: What is the minimum amount of data required to transmit a message without losing information?

Shannon’s answer—the entropy of the message—became the theoretical foundation for all data compression. But Shannon also revealed an uncomfortable truth: most messages contain redundancy. English text, for instance, can be compressed by about 50% without losing any meaning because of predictable patterns in letter combinations. The letter “u” almost always follows “q.” The word “the” appears more frequently than “quintessential.”
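To make the idea of entropy concrete, here is a minimal Python sketch that estimates bits per character from single-letter frequencies alone. The function name `entropy_bits_per_symbol` and the sample sentence are illustrative choices, not anything from Shannon's paper. This simple estimate ignores context such as “u” following “q,” so it understates how redundant real English is.

```python
import math
from collections import Counter


def entropy_bits_per_symbol(text: str) -> float:
    """Shannon entropy of the character distribution, in bits per character."""
    counts = Counter(text)
    total = len(text)
    # Sum p * log2(1/p) over every distinct character.
    return sum((n / total) * math.log2(total / n) for n in counts.values())


sample = "the quick brown fox jumps over the lazy dog"
distinct = len(set(sample))

# A code that ignores letter frequencies needs log2(distinct) bits per character.
fixed_length_bits = math.log2(distinct)
measured_bits = entropy_bits_per_symbol(sample)

print(f"fixed-length code: {fixed_length_bits:.2f} bits/char")
print(f"frequency-aware:   {measured_bits:.2f} bits/char")
```

The gap between the two numbers is the redundancy that a frequency-aware compressor can remove without losing a single character.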

Audio is similarly redundant, but in ways that are far more subtle.

A raw, uncompressed CD-quality audio stream requires 1,411 kilobits per second (kbps): 44,100 samples per second × 16 bits per sample × 2 channels. Bluetooth’s original radio, however, had a gross data rate of only 1 megabit per second, and the bandwidth actually usable for audio was well below that. The solution wasn’t faster transmission. It was smarter compression.
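The arithmetic behind the CD figure, and behind the 352 kbps in this section's heading, can be checked in a few lines. This is a small sketch; the function name `pcm_bitrate_bps` is made up for illustration.

```python
def pcm_bitrate_bps(sample_rate_hz: int, bits_per_sample: int, channels: int) -> int:
    """Raw PCM data rate in bits per second."""
    return sample_rate_hz * bits_per_sample * channels


cd_bps = pcm_bitrate_bps(44_100, 16, 2)
quarter_rate_bps = cd_bps // 4  # a 4:1 compression target

print(f"CD audio:    {cd_bps / 1000:.1f} kbps")            # CD audio:    1411.2 kbps
print(f"One quarter: {quarter_rate_bps / 1000:.1f} kbps")  # One quarter: 352.8 kbps
```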

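The aptX codec, which grew out of research at Queen’s University Belfast in the 1980s and is now owned by Qualcomm, achieves something that at first seems to violate Shannon’s limit. It transmits 44.1 kHz stereo audio at 352 kbps, one-quarter the data rate of CD audio, yet most listeners cannot distinguish it from the original. There is no contradiction: Shannon’s entropy bound governs lossless coding, where every bit of information must survive, while aptX is lossy and is judged by perception, not by bit-exact reconstruction. Shannon’s theory applies to information, not perception.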

What we hear is not the same as what exists.

48,000 Times Per Second: The Nyquist Numbers That Define Digital Audio

Before understanding compression, we must understand sampling. The Nyquist-Shannon sampling theorem, published in various forms between 1928 and 1949, states a fundamental limit: to capture a band-limited signal so that it can be reconstructed exactly, you must sample at more than twice the frequency of its highest component.

Human hearing spans roughly 20 Hz to 20,000 Hz. To capture this range without introducing artifacts called “aliasing,” digital audio must sample more than 40,000 times per second. The CD standard chose 44,100 Hz, which leaves a comfortable margin for the anti-aliasing filter and also matched the video-based recording equipment used for digital audio at the time.
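Aliasing is easy to see with numbers. The sketch below, with the hypothetical helper `alias_frequency_hz`, computes the frequency at which a pure tone appears after sampling. Any tone above half the sample rate folds back into the audible band as a different, wrong frequency.

```python
def alias_frequency_hz(tone_hz: float, sample_rate_hz: float) -> float:
    """Apparent frequency of a sampled pure tone, folded into 0..sample_rate/2."""
    folded = tone_hz % sample_rate_hz
    return min(folded, sample_rate_hz - folded)


# A 20 kHz tone is below half of 44.1 kHz, so it is captured as-is.
print(alias_frequency_hz(20_000, 44_100))  # 20000

# A 30 kHz tone is above half of 44.1 kHz and shows up as 14.1 kHz instead.
print(alias_frequency_hz(30_000, 44_100))  # 14100
```

This is why recorders filter out content above half the sample rate before sampling: once a tone has folded down, it cannot be told apart from a real tone at that frequency.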

The aptX codec supports sampling rates up to 48,000 Hz (48 kHz), slightly exceeding CD quality. But the sampling rate only tells part of the story. Each sample captures the amplitude of the sound wave at an instant in time. The question is: how much data do we need to describe that amplitude?

CD audio uses 16 bits per sample, offering 65,536 possible amplitude values. This provides a dynamic range of about 96 decibels, roughly the difference between a pin dropping and a jet engine at takeoff. The aptX codec accepts this 16-bit input but does not transmit 16 bits for every sample: at 352 kbps for two channels of 44.1 kHz audio, it averages 4 bits per sample. It gets there through a different mechanism entirely.
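Both figures follow directly from the bit depth. A short sketch, using the common approximation that dynamic range is 20·log10 of the number of quantization levels (the function names are illustrative):

```python
import math


def quantization_levels(bits: int) -> int:
    """Number of distinct amplitude values a sample of this width can hold."""
    return 2 ** bits


def dynamic_range_db(bits: int) -> float:
    """Ratio of the largest to the smallest representable amplitude, in decibels."""
    return 20 * math.log10(quantization_levels(bits))


print(quantization_levels(16))          # 65536
print(round(dynamic_range_db(16), 1))   # 96.3
```

Each extra bit doubles the number of levels and adds about 6 dB.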

Sampling and bit depth define the resolution of digital audio. Compression defines how efficiently we transmit it.

Predicting the Future: How Audio Codecs Guess What Comes Next

The secret to aptX’s efficiency lies in a technique called Adaptive Differential Pulse-Code Modulation, or ADPCM, which aptX applies separately to four frequency sub-bands of the signal. It sounds intimidating, but the principle is surprisingly intuitive.

Standard Pulse-Code Modulation (PCM), the format used on CDs, transmits the absolute value of each audio sample. If a sound wave is at amplitude 32,767 (the maximum for a signed 16-bit sample), the system transmits 32,767. If the next sample is 32,765, it transmits that number in full.

ADPCM asks: instead of transmitting absolute values, why not transmit the difference between samples?

Sound waves rarely jump wildly from one extreme to another. A violin string vibrating at 440 Hz (the note A above middle C) moves smoothly, predictably. Consecutive samples are close together: for a full-scale 440 Hz tone sampled at 44.1 kHz, neighboring samples differ by at most about 2,050 out of a range of ±32,767, and in quieter passages the differences are far smaller. Smaller numbers require fewer bits to transmit.
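A quick experiment illustrates the point. The sketch below generates one second of a full-scale 440 Hz sine wave (a stand-in for the violin note, which in reality has overtones) and compares how many bits the samples need with how many their differences need. All names are illustrative.

```python
import math

SAMPLE_RATE = 44_100
TONE_HZ = 440
FULL_SCALE = 32_767  # largest signed 16-bit value

samples = [
    round(FULL_SCALE * math.sin(2 * math.pi * TONE_HZ * n / SAMPLE_RATE))
    for n in range(SAMPLE_RATE)
]
# Difference between each sample and the one before it.
diffs = [b - a for a, b in zip(samples, samples[1:])]


def signed_bits_needed(magnitude: int) -> int:
    """Bits for a signed integer of this magnitude (value bits plus a sign bit)."""
    return magnitude.bit_length() + 1


max_sample = max(abs(s) for s in samples)
max_diff = max(abs(d) for d in diffs)

print("largest sample:    ", max_sample, "->", signed_bits_needed(max_sample), "bits")
print("largest difference:", max_diff, "->", signed_bits_needed(max_diff), "bits")
```

The saving shrinks as frequency rises, because high-frequency waves change faster between samples. Plain differencing is therefore only a starting point.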

But ADPCM goes further. It doesn’t just transmit differences—it predicts what the next sample will be and transmits the error in its prediction.

The codec maintains a model of the audio signal. Based on previous samples, it predicts what the next sample should be. It then compares this prediction to the actual sample and transmits only the prediction error. When the prediction is good—as it often is for predictable sounds like sustained musical tones—the error is tiny. When the prediction fails, the codec adapts, adjusting its model for better future predictions.
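The loop described above can be sketched in Python. This is a deliberately simplified toy, not the aptX algorithm: it assumes the crudest possible predictor (the previous reconstructed sample), a 4-bit code per sample, and a made-up rule for growing and shrinking the step size. The class name `ToyAdpcm` and every constant in it are assumptions chosen for illustration.

```python
import math


def _clamp(value: int, low: int, high: int) -> int:
    return max(low, min(high, value))


class ToyAdpcm:
    """State shared by encoder and decoder: the prediction and the step size."""

    def __init__(self) -> None:
        self.predicted = 0  # prediction for the next sample
        self.step = 16      # size of one quantization step (illustrative start)

    def _update(self, code: int) -> int:
        # Reconstruct the sample exactly as the decoder will see it.
        self.predicted = _clamp(self.predicted + code * self.step, -32768, 32767)
        # Adapt: large codes mean the step is too small, tiny codes too large.
        if abs(code) >= 6:
            self.step = min(self.step * 2, 4096)
        elif abs(code) <= 1:
            self.step = max(self.step // 2, 1)
        return self.predicted

    def encode(self, sample: int) -> int:
        error = sample - self.predicted                  # prediction error
        code = _clamp(round(error / self.step), -8, 7)   # squeeze into 4 bits
        self._update(code)
        return code

    def decode(self, code: int) -> int:
        return self._update(code)


tone = [round(20_000 * math.sin(2 * math.pi * 440 * n / 44_100)) for n in range(2000)]
encoder, decoder = ToyAdpcm(), ToyAdpcm()
codes = [encoder.encode(s) for s in tone]     # 4-bit values instead of 16-bit
decoded = [decoder.decode(c) for c in codes]

# Skip the first samples, where the step size is still ramping up.
worst = max(abs(a - b) for a, b in zip(tone[200:], decoded[200:]))
print("worst reconstruction error after warm-up:", worst)
```

The encoder updates its state from the quantized code, not from the true sample, so encoder and decoder stay in lockstep and errors do not accumulate. Real codecs use better predictors and carefully tuned adaptation tables.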

This adaptive prediction is why aptX can achieve near-CD quality at one-quarter the data rate. It’s not throwing away information randomly—it’s exploiting the predictable structure of sound itself.

The Psychoacoustic Edge: What We Don’t Hear, We Don’t Need

Some codecs go further than ADPCM. They exploit not just the structure of sound, but the structure of human hearing.

Psychoacoustic models identify sounds that the human ear cannot perceive. A loud sound at 1,000 Hz will mask quieter sounds at nearby frequencies, because the loud tone raises the threshold of hearing in its neighborhood and the quieter ones fall below it. By identifying and discarding these masked sounds, codecs like AAC (used by Apple) and MP3 can achieve even higher compression ratios.
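The masking idea can be caricatured in a few lines. The sketch below is a toy, not the model used by AAC or MP3: it treats sound as a short list of (frequency, level) pairs, and the two constants that shape the masking threshold are invented for illustration only.

```python
import math

# Illustrative assumptions, not measured psychoacoustic values.
MASKING_OFFSET_DB = 15.0     # how far below a masker a neighbor must be to vanish
SLOPE_DB_PER_OCTAVE = 30.0   # how quickly masking fades with frequency distance


def is_masked(component, others) -> bool:
    """True if any other component is loud and close enough to hide this one."""
    freq, level = component
    for other_freq, other_level in others:
        octaves_apart = abs(math.log2(freq / other_freq))
        threshold = other_level - MASKING_OFFSET_DB - SLOPE_DB_PER_OCTAVE * octaves_apart
        if level < threshold:
            return True
    return False


def audible_components(components):
    """Keep only the components that no other component masks."""
    return [
        c for i, c in enumerate(components)
        if not is_masked(c, components[:i] + components[i + 1:])
    ]


# (frequency in Hz, level in dB): a loud 1 kHz tone with two quiet companions.
spectrum = [(1000, 80), (1100, 40), (4000, 40)]
print(audible_components(spectrum))  # [(1000, 80), (4000, 40)]
```

The quiet tone at 1,100 Hz sits right next to the loud one and is dropped, while the equally quiet tone two octaves away survives. A real encoder spends fewer bits on masked regions instead of deleting them outright, but the principle is the same.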

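The aptX codec takes a different philosophical approach. Rather than running a masking model to decide what the listener can and cannot hear, it codes the waveform itself using an efficient mathematical representation. It is still lossy, since quantizing the prediction error discards some detail, but the loss is spread across the signal and no sound is singled out for removal. This makes it more “transparent,” meaning closer to the original, than psychoacoustic codecs in some listening tests, though it requires a higher bitrate.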

The trade-off is fundamental: efficiency versus transparency. Different codecs make different choices, and those choices reflect different theories about what matters in audio reproduction.

The Trade-Off Triangle: Quality, Latency, and Power

All audio codecs navigate a three-way trade-off between sound quality, transmission latency, and power consumption. Improving one typically degrades the others.

Quality: Higher bitrates preserve more detail but require more bandwidth and power. The LDAC codec, developed by Sony, can transmit at up to 990 kbps, about 70% of the bitrate of uncompressed CD audio, but demands significant processing power and a strong wireless connection.

Latency: Lower latency reduces the delay between sound leaving the source and reaching the ear. This matters for video (lip-sync issues) and gaming (reaction time). But low-latency modes often sacrifice quality or increase power consumption. The aptX Adaptive codec dynamically adjusts between quality and latency based on content type.

Power: Lower power consumption extends battery life—critical for wireless earbuds with tiny batteries. But power-efficient codecs often use simpler algorithms that may not sound as good as more computationally intensive alternatives.

Bluetooth 5.2, the wireless standard found in contemporary earbuds, lays the groundwork for improving all three dimensions: it introduced the isochronous channels on which Bluetooth LE Audio and its LC3 codec are built. The LC3 codec achieves better quality at lower bitrates than the older SBC codec. A device using LC3 at 160 kbps can sound about as good as SBC at 320 kbps, and sending half as many bits means less radio airtime, which reduces power consumption, although the actual savings depend on the hardware.
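To see what halving the bitrate means for the radio, consider how many bytes must cross the air for a fixed slice of audio. The bitrates below are the ones quoted above. The 10-millisecond interval is an arbitrary illustrative choice, and `bytes_per_interval` is a hypothetical helper name.

```python
def bytes_per_interval(bitrate_kbps: float, interval_ms: float = 10) -> float:
    """Payload bytes needed to carry `interval_ms` of audio at `bitrate_kbps`."""
    bits = bitrate_kbps * interval_ms  # kilobits/second × milliseconds = bits
    return bits / 8


for name, kbps in [("LC3", 160), ("SBC", 320)]:
    print(f"{name} at {kbps} kbps: {bytes_per_interval(kbps):.0f} bytes per 10 ms")
# LC3 at 160 kbps: 200 bytes per 10 ms
# SBC at 320 kbps: 400 bytes per 10 ms
```

Fewer bytes per interval means the radio can spend more time idle, which is where much of the battery saving comes from.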

This is why modern wireless audio devices can offer both good sound quality and long battery life. The engineering has become clever enough to have it both ways.

What “CD Quality” Actually Means in 2026

The phrase “CD-quality audio” has become a marketing shorthand, but its meaning is more nuanced than many realize.

Strictly speaking, CD quality refers to 16-bit, 44.1 kHz audio—a specific technical specification. But human hearing has limits. Controlled studies have repeatedly shown that most listeners—even self-described audiophiles—cannot reliably distinguish between 16-bit/44.1 kHz audio and higher-resolution formats like 24-bit/96 kHz in blind tests.

The real question is whether wireless codecs like aptX can match this perceptual threshold.

Independent testing by audio publications suggests they can. The aptX codec at 352 kbps scores similarly to uncompressed audio in double-blind listening tests, with most listeners unable to identify which is which. The differences that exist—slightly reduced treble extension, marginally altered stereo imaging—are subtle enough that they require trained ears and high-quality playback equipment to detect.

For the vast majority of listeners, in the vast majority of environments—commuting, exercising, working—the gap between wireless audio and CD quality has become perceptually irrelevant.

The Engineering Philosophy of Invisible Compression

There is a deeper lesson in the development of audio codecs, one that extends beyond sound.

The aptX codec, and its siblings in the Bluetooth audio ecosystem, represent a particular kind of engineering wisdom: the art of being good enough. Not perfect—never perfect—but good enough that the imperfections fall below the threshold of perception.

This is different from the engineering philosophy of the CD era, which aimed for mathematical transparency. The CD specification was designed to exceed human hearing capabilities in every dimension. It was an exercise in over-engineering, in building a system so capable that its limitations would never be the bottleneck.

Wireless audio codecs take the opposite approach. They aim precisely at the bottleneck. They ask: What is the minimum data required to transmit sound that humans cannot distinguish from the original? They then build systems that approach that minimum from above, trimming every possible bit while staying just on the right side of perceptibility.

Both philosophies have their place. The CD approach gives us confidence—the knowledge that the medium is not the limitation. The codec approach gives us efficiency—the ability to transmit high-quality audio through channels that would have seemed impossibly narrow just decades ago.

Perhaps the most remarkable thing about modern wireless audio is not that it works, but that it works so well that we have stopped noticing the engineering miracle taking place in our ears. The compression is invisible because it has been designed to be invisible. The data loss is imperceptible because the algorithms have learned what we perceive and what we do not.

The next time you hear “CD-quality audio” in a marketing claim, you’ll know the question isn’t whether it’s possible—but whether you can actually hear the difference.

The technology behind wireless audio codecs continues to evolve. Bluetooth’s LE Audio standard, announced in 2020 and completed in 2022, promises further improvements in efficiency and quality as it spreads through new devices. The future of wireless audio is not louder or clearer. It is more invisible.