Acoustic Fortresses: Engineering Auditory Isolation in High-Decibel Environments

Updated on March 4, 2026, 7:23 p.m.

The modern world is an aggressively loud biome. From the relentless, low-frequency hum of industrial HVAC systems to the percussive, high-amplitude impacts of construction sites, human auditory systems are constantly subjected to stimuli they were never evolutionarily designed to withstand. The intersection of occupational safety and consumer audio technology has birthed a unique category of devices designed to act as acoustic gatekeepers. These hybrid systems must simultaneously block destructive energy waves while selectively permitting critical communication and entertainment data to pass through.

To fully comprehend the sophisticated engineering required to achieve this delicate balance, we must deconstruct the physics of sound, the biological vulnerabilities of the human ear, the material science of acoustic dampening, and the complex algorithms governing modern digital signal processing.

Are We Slowly Deafening Ourselves Without Knowing It?

To understand the necessity of acoustic isolation, we must first examine the invisible physics of sound and how it interacts with human biology. Sound is not a physical object; it is a mechanical wave—a rapid fluctuation in atmospheric pressure propagating through a medium, typically air. These waves consist of alternating bands of high pressure (compression) and low pressure (rarefaction).

When these pressure waves enter the human ear canal, they strike the tympanic membrane (eardrum), a delicate, cone-shaped structure that vibrates in exact synchronization with the atmospheric pressure changes. These micro-vibrations are transferred through the ossicles—three of the smallest bones in the human body (the malleus, incus, and stapes)—which act as a mechanical lever system, amplifying the force of the vibrations before transmitting them into the fluid-filled cochlea.

Inside the cochlea lies the Organ of Corti, the true biological marvel of hearing. It contains thousands of microscopic sensory hair cells, each topped with a bundle of hair-like projections known as stereocilia. As the fluid inside the cochlea ripples, it deflects these bundles. The bending motion opens ion channels, triggering an electrochemical signal that travels via the auditory nerve to the brain, where it is interpreted as sound.

The critical vulnerability in this system is that these hair cells do not regenerate in humans. When subjected to excessive mechanical force—which correlates to the amplitude, or volume, of the sound wave—these delicate structures can be bent too far, snapping or becoming permanently metabolically exhausted. This results in Sensorineural Hearing Loss (SNHL), specifically Noise-Induced Hearing Loss (NIHL).

The danger is severely compounded by the mathematical reality of how we measure sound intensity: the decibel (dB). The decibel scale is base-10 logarithmic, not linear. An increase of a mere 3 dB represents a doubling of acoustic energy. However, because human perception of loudness is also logarithmic, it takes an increase of approximately 10 dB for a sound to be perceived as “twice as loud.” This creates a dangerous psychological trap. A machine operating at 90 dB has ten times the acoustic energy of a machine at 80 dB, and one hundred times the energy of a machine at 70 dB. Workers frequently underestimate the destructive power of their environment because their subjective perception of “loudness” fails to grasp the exponential increase in mechanical force violently impacting their inner ear. This biological blind spot necessitates rigorous, scientifically validated intervention.
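The arithmetic behind this trap is easy to check. The short Python sketch below converts a level difference in decibels into an energy ratio and back; the function names are illustrative and not part of any standard library.

```python
import math

def energy_ratio(delta_db: float) -> float:
    """Ratio of acoustic energy (intensity) for a level difference in dB."""
    return 10 ** (delta_db / 10)

def level_difference_db(ratio: float) -> float:
    """Level difference in dB that corresponds to a given energy ratio."""
    return 10 * math.log10(ratio)

# 3 dB is roughly a doubling; 10 dB is tenfold; 20 dB is a hundredfold.
for delta in (3, 10, 20):
    print(f"+{delta} dB -> {energy_ratio(delta):.1f}x the acoustic energy")
```

The 90 dB machine and the 70 dB machine differ by 20 dB, which is why the energy gap between them is a factor of one hundred even though the louder one may only sound about four times as loud.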

The Acoustic Moat Around Your Eardrum

Defending the delicate stereocilia against this invisible bombardment requires the construction of a physical barrier capable of dissipating acoustic energy before it reaches the tympanic membrane. In the realm of high-decibel industrial environments, the primary defense mechanism is passive acoustic attenuation.

Passive attenuation relies entirely on material science and acoustic impedance. When a sound wave encounters a boundary between two different materials, such as the transition from air to a dense polymer plug, a portion of the wave is reflected, a portion is absorbed, and the remainder is transmitted. The goal of an effective earplug is to maximize reflection and absorption and minimize transmission.
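To get a feel for how strongly an impedance mismatch reflects sound, consider the textbook case of a plane wave hitting a flat boundary head-on. The sketch below is an idealization, not a model of a real earplug: it ignores absorption, plug thickness, and ear canal geometry, and the impedance chosen for the plug material is a hypothetical order-of-magnitude figure rather than a measured value.

```python
def intensity_reflection(z1: float, z2: float) -> float:
    """Fraction of incident sound intensity reflected at a flat boundary
    (plane wave, normal incidence) between acoustic impedances z1 and z2."""
    return ((z2 - z1) / (z2 + z1)) ** 2

Z_AIR = 413.0    # Pa*s/m, approximate value for air at room temperature
Z_PLUG = 1.0e6   # Pa*s/m, hypothetical figure for a dense elastomer (assumption)

reflected = intensity_reflection(Z_AIR, Z_PLUG)
transmitted = 1.0 - reflected  # lossless idealization: no absorption term

print(f"reflected:   {reflected:.4f}")
print(f"transmitted: {transmitted:.4f}")
```

With a mismatch this large, nearly all of the incident energy is turned back at the surface. When the two impedances are equal the function returns zero, which is the case of no boundary at all.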

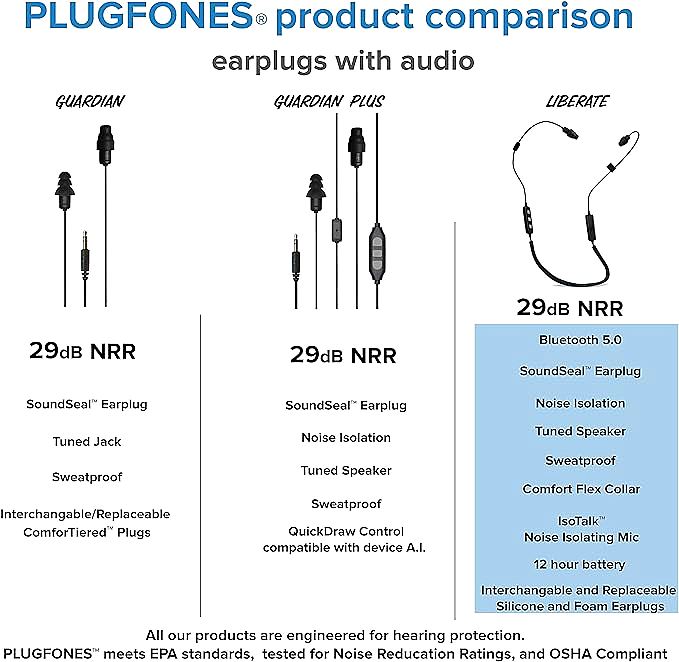

Devices engineered for occupational environments, such as the Plugfones Liberate 2.0, utilize specific polymers to achieve this. The two most prominent materials are viscoelastic polyurethane foam (memory foam) and silicone elastomers.

Polyurethane foam is highly effective due to its cellular structure. As a sound wave enters the foam, the air pressure forces the structural struts of the polymer matrix to bend and flex. This mechanical deformation converts the kinetic energy of the sound wave into microscopic amounts of thermal energy (heat), which harmlessly dissipates. Because it is viscoelastic, it slowly expands after being compressed, allowing it to perfectly conform to the highly irregular, highly individualized topography of the human ear canal, creating a tight, custom acoustic seal.

Silicone elastomers, on the other hand, rely on density and structural geometry. They are often molded into tiered flanges (like the ComforTiered tips). As the sound wave attempts to pass through, it must navigate multiple dense barriers. The acoustic impedance mismatch between the air and the dense silicone reflects much of the energy back out of the ear canal.

The efficacy of these barriers is standardized through a metric known as the Noise Reduction Rating (NRR). Derived from laboratory tests conducted according to American National Standards Institute (ANSI) methods, the NRR provides a theoretical baseline for attenuation. For example, a device yielding an NRR of 29 dB with foam tips suggests that, under perfect conditions, a 100 dB environmental noise could be reduced to 71 dB at the eardrum. This transformation shifts the exposure from a level that causes rapid, permanent damage to a level that is biologically sustainable for extended periods, fulfilling the fundamental requirement of occupational hearing conservation.
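A rough way to see what that 29 dB buys is to compare allowable exposure times before and after attenuation. The sketch below assumes the NIOSH recommended exposure limit (85 dBA for eight hours, with the allowed time halving for every 3 dB increase), which is not cited in this article, and it keeps the simplified direct subtraction of the NRR used in the example above.

```python
def level_at_ear(ambient_db: float, nrr_db: float) -> float:
    """Idealized level at the eardrum: ambient level minus the full NRR."""
    return ambient_db - nrr_db

def allowed_hours(level_dba: float,
                  criterion_db: float = 85.0,
                  exchange_db: float = 3.0) -> float:
    """Allowable daily exposure time; defaults follow the NIOSH
    recommendation (assumption: 85 dBA criterion, 3 dB exchange rate)."""
    return 8.0 / 2 ** ((level_dba - criterion_db) / exchange_db)

unprotected = 100.0
protected = level_at_ear(unprotected, 29.0)

print(f"unprotected at {unprotected:.0f} dB: {allowed_hours(unprotected) * 60:.0f} minutes")
print(f"protected at {protected:.0f} dB: {allowed_hours(protected):.0f} hours")
```

Under these assumptions the unprotected worker exhausts a full day's allowance in about a quarter of an hour, while the protected level stays within limits for far longer than any shift.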

Why Silence Requires a Perfect Physical Blockade

A common misconception in modern consumer audio is the belief that Active Noise Cancellation (ANC) technology renders physical earplugs obsolete. While ANC is a brilliant application of wave interference—using external microphones to sample ambient noise and projecting an exact anti-phase waveform to destructively cancel the original sound—it possesses severe limitations in industrial settings.

ANC systems excel at neutralizing low-frequency, continuous, stationary noise, such as the hum of an airplane engine. However, they suffer from inherent computational latency. In the fraction of a millisecond it takes for the microphone to detect a sound, the digital signal processor to analyze it, and the speaker driver to generate the anti-wave, a sudden, high-amplitude transient noise—like a hammer striking steel or a pneumatic nail gun firing—has already bypassed the driver and struck the eardrum. Furthermore, ANC systems are constrained by the physical limits of their speaker drivers; they cannot generate an anti-wave powerful enough to cancel a 110 dB impact noise without destroying their own components.
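The cost of latency can be illustrated with a steady tone. If the anti-phase copy arrives late by a delay d, the sum of a unit-amplitude sine and its delayed inverse has a peak amplitude of 2·|sin(π·f·d)|. The sketch below evaluates that expression; the 50 microsecond delay is a hypothetical figure chosen for illustration, not a measurement of any particular product.

```python
import math

def residual_db(freq_hz: float, delay_s: float) -> float:
    """Level of the leftover sound, relative to the original tone, when a
    unit-amplitude sine is summed with an inverted copy delayed by delay_s."""
    # sin(w*t) - sin(w*(t - d)) has peak amplitude 2*|sin(pi*f*d)|
    amplitude = 2 * abs(math.sin(math.pi * freq_hz * delay_s))
    if amplitude == 0:
        return float("-inf")  # perfect cancellation
    return 20 * math.log10(amplitude)

DELAY_S = 50e-6  # hypothetical end-to-end processing delay (assumption)

for freq in (100, 1000, 5000):
    print(f"{freq:>5} Hz: residual {residual_db(freq, DELAY_S):+.1f} dB")
```

With this delay a 100 Hz hum is suppressed by roughly 30 dB, a 1 kHz tone by only about 10 dB, and a 5 kHz tone actually comes out louder than it went in. The same timing error that barely matters for engine drone is fatal for the high-frequency content of a sharp impact.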

Therefore, true auditory isolation in hazardous environments requires a reliable physical blockade. This is why regulators such as the Occupational Safety and Health Administration (OSHA) require hearing protectors to attenuate a worker's exposure to permissible levels, and why protective equipment carries laboratory-tested passive attenuation ratings. To be marketed as “OSHA compliant,” a hybrid audio device cannot simply rely on software tricks; it must function primarily as a physical earplug with a tested NRR.

Furthermore, a physical blockade solves the issue of internal audio volume. In a loud environment without passive isolation, a listener must crank the volume of their media to dangerous levels just to overcome the background noise (loosely analogous to the Lombard effect, in which speakers involuntarily raise their voices in noisy surroundings). By establishing a physical acoustic moat that drops the background noise by 29 dB, the internal speaker only needs to output audio at a safe, low volume (e.g., 60 dB) to be perceived clearly. The physical seal breaks the vicious cycle of competing with the environment through ever-increasing, damaging volumes.

Bandwidth vs. Battery: The Wireless Audio Dilemma

Transitioning these protective acoustic fortresses into connected, intelligent devices introduces a complex engineering conflict: the brutal tradeoff between wireless transmission bandwidth, computational processing power, and chemical battery density.

In heavy industry or active outdoor environments, physical tethers (cables) are unacceptable safety hazards. They can become entangled in rotating machinery or snag on structural elements. Therefore, communication must be routed through Radio Frequency (RF) protocols, predominantly Bluetooth. Bluetooth operates in the globally unlicensed 2.4 GHz Industrial, Scientific, and Medical (ISM) band. To maintain a stable connection amidst the electromagnetic chaos generated by heavy machinery and industrial motors, the protocol utilizes Frequency Hopping Spread Spectrum (FHSS), switching carrier frequencies up to 1,600 times per second.
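The idea behind frequency hopping can be sketched in a few lines. The channel plan below matches Bluetooth Classic (79 channels spaced 1 MHz apart starting at 2402 MHz, with a nominal 1,600 hops per second), but the hop selection itself is a stand-in: a seeded pseudo-random generator is used purely for illustration and is not the hop selection algorithm defined in the Bluetooth specification.

```python
import random

NUM_CHANNELS = 79        # Bluetooth Classic channel count
BASE_MHZ = 2402          # centre frequency of channel 0
HOPS_PER_SECOND = 1600   # nominal hop rate

def slot_duration_us() -> float:
    """Time spent on one carrier before hopping, in microseconds."""
    return 1_000_000 / HOPS_PER_SECOND

def hop_sequence(shared_seed: int, hops: int) -> list[int]:
    """Illustrative hop pattern: both ends derive the same pseudo-random
    channel list from a shared seed (a simplification, see lead-in)."""
    rng = random.Random(shared_seed)
    return [BASE_MHZ + rng.randrange(NUM_CHANNELS) for _ in range(hops)]

earbud = hop_sequence(shared_seed=0xC0FFEE, hops=8)
phone = hop_sequence(shared_seed=0xC0FFEE, hops=8)

print(f"slot length: {slot_duration_us():.0f} us")
print("carriers (MHz):", earbud)
print("both ends agree:", earbud == phone)
```

Because a burst of interference from a motor or welder typically occupies only part of the band, a link that dwells on each carrier for a fraction of a millisecond loses at most a few slots before it lands on clean spectrum again.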

Maintaining this RF link, decoding complex audio codecs (like SBC or AAC), and driving the micro-acoustic transducers (speakers) requires continuous electrical current. This brings us to the fundamental limitation of portable electronics: the lithium-ion polymer (LiPo) cell.

Chemical energy storage is dictated by strict volumetric constraints. You cannot cheat the laws of chemistry; a specific volume of lithium, cobalt, and graphite can only store a specific number of electrons. In a wearable device, weight and physical footprint must be minimized to prevent ergonomic fatigue.

Achieving extended operational lifespans—such as the 12-hour continuous playback rating found in systems like the Plugfones NeverOut battery architecture—requires ruthless power management. Engineers must utilize ultra-low-power System-on-Chip (SoC) architectures. These chips aggressively gate their own power consumption, shutting down specific processing blocks at the microsecond level when they are not actively required. The entire system is an exercise in extreme electrical frugality, balancing the necessary voltage to push air molecules through the speaker against the hard limits of the battery’s chemical capacity, ensuring the device survives an entire grueling twelve-hour industrial shift without demanding a recharge.
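A back-of-the-envelope power budget shows why aggressive gating matters. Every number in the sketch below is hypothetical and chosen only to make the arithmetic concrete; none of it describes the actual cell or chipset in any product named here.

```python
def average_current_ma(active_ma: float, sleep_ma: float, duty_cycle: float) -> float:
    """Time-weighted average current when the chip is fully active for a
    fraction duty_cycle of the time and power-gated for the rest."""
    return duty_cycle * active_ma + (1.0 - duty_cycle) * sleep_ma

def runtime_hours(capacity_mah: float, avg_ma: float) -> float:
    """Idealized runtime: ignores converter losses, aging, and temperature."""
    return capacity_mah / avg_ma

CAPACITY_MAH = 120.0   # hypothetical cell capacity
ACTIVE_MA = 15.0       # hypothetical draw with radio, codec, and driver running
SLEEP_MA = 1.0         # hypothetical draw with processing blocks gated off

always_on = runtime_hours(CAPACITY_MAH, average_current_ma(ACTIVE_MA, SLEEP_MA, 1.0))
gated = runtime_hours(CAPACITY_MAH, average_current_ma(ACTIVE_MA, SLEEP_MA, 0.6))

print(f"always on: {always_on:.1f} h")
print(f"gated 60%: {gated:.1f} h")
```

With these illustrative figures, keeping every block powered drains the cell in eight hours, while gating the chip for 40 percent of the time stretches the same cell past a twelve-hour shift.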

When the Jackhammer Meets the Conference Call

While isolating the user’s eardrum from the environment is a matter of passive physical barriers, isolating the user’s voice for outbound communication requires highly aggressive, algorithmic intervention.

Consider the acoustic reality of placing a phone call while operating heavy machinery. The microphone capsule embedded in the device’s control pendant is entirely agnostic; it blindly converts all incoming air pressure variations into electrical voltage. In this scenario, the energy of the environmental noise (the jackhammer) reaching the capsule can be many times higher than that of the human voice originating from the user’s mouth a few inches away. If this raw signal were transmitted directly to the cellular network, the recipient on the other end of the line would hear little more than a deafening, distorted roar.

To resolve this, the raw audio data is routed through a dedicated Digital Signal Processor (DSP) before transmission. This requires real-time computational analysis of the audio stream using techniques such as the Fast Fourier Transform (FFT). The FFT algorithm takes the incoming time-domain waveform and slices it into the frequency domain, separating the sound into its constituent pitches, much like a prism separates white light into a rainbow.
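The prism analogy can be made concrete with a small example. The sketch below implements a textbook recursive radix-2 FFT in plain Python and applies it to a synthetic signal containing two tones. The sample rate, frame size, and tone frequencies are arbitrary choices for illustration; a production DSP would use an optimized fixed-point routine rather than anything like this.

```python
import cmath
import math

def fft(samples):
    """Recursive radix-2 Cooley-Tukey FFT; len(samples) must be a power of two."""
    n = len(samples)
    if n == 1:
        return [complex(samples[0])]
    even = fft(samples[0::2])
    odd = fft(samples[1::2])
    out = [0j] * n
    for k in range(n // 2):
        twiddle = cmath.exp(-2j * math.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle
        out[k + n // 2] = even[k] - twiddle
    return out

SAMPLE_RATE = 8000  # Hz, illustrative
N = 256             # samples per frame, a power of two

# Time-domain frame: a 250 Hz tone plus a quieter 1000 Hz tone.
signal = [
    math.sin(2 * math.pi * 250 * i / SAMPLE_RATE)
    + 0.5 * math.sin(2 * math.pi * 1000 * i / SAMPLE_RATE)
    for i in range(N)
]

# Keep the first half of the spectrum (the rest mirrors it for real input).
magnitudes = [abs(c) for c in fft(signal)[: N // 2]]

# The two strongest bins, converted back to frequencies in Hz.
peak_bins = sorted(range(N // 2), key=lambda k: magnitudes[k], reverse=True)[:2]
peak_freqs = sorted(k * SAMPLE_RATE / N for k in peak_bins)
print(peak_freqs)
```

The two tones that were tangled together in the waveform appear as two isolated peaks in the spectrum, which is what lets later stages treat different frequency regions differently.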

Once in the frequency domain, advanced algorithms, such as those driving the IsoTalk noise-isolating microphone technology, go to work. Human speech has a distinctive acoustic fingerprint. The fundamental frequencies of a voice, combined with the resonant formants created by the vocal tract, produce predictable mathematical patterns. Industrial noise, wind shear, and engine rumble possess entirely different spectral characteristics; they are often broad-spectrum, stationary (constant over time), or localized to extreme low frequencies.

The DSP applies a process known as Spectral Subtraction. It continuously monitors the background noise during the brief pauses between the user’s words. It builds a statistical profile of the unwanted noise, and then, in real time, mathematically subtracts that specific energy profile from the total audio signal. Furthermore, it employs Voice Activity Detection (VAD) algorithms to aggressively mute the microphone channel the instant the user stops speaking, preventing the environmental roar from bleeding through the connection. The result is an auditory illusion for the remote listener: they hear a clean, isolated vocal track, completely oblivious to the chaotic, high-decibel warzone surrounding the speaker.
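A minimal sketch of these two steps, operating on per-bin magnitude spectra, might look like the following. It is a generic textbook formulation and makes no claim about how any commercial implementation works; the spectral floor, the 6 dB threshold, and the four-bin toy spectra are all assumptions chosen for readability.

```python
import math

def estimate_noise(noise_frames):
    """Average magnitude per frequency bin over frames judged to be noise-only."""
    n_bins = len(noise_frames[0])
    return [sum(f[k] for f in noise_frames) / len(noise_frames) for k in range(n_bins)]

def spectral_subtract(frame, noise, floor=0.05):
    """Subtract the noise estimate bin by bin, never dropping below a small
    fraction of the original magnitude (avoids negative magnitudes)."""
    return [max(m - n, floor * m) for m, n in zip(frame, noise)]

def is_speech(frame, noise, threshold_db=6.0):
    """Crude energy-based VAD: the frame counts as speech only if its energy
    exceeds the noise estimate's energy by threshold_db."""
    frame_energy = max(sum(m * m for m in frame), 1e-12)
    noise_energy = max(sum(n * n for n in noise), 1e-12)
    return 10 * math.log10(frame_energy / noise_energy) > threshold_db

# Toy magnitude spectra with four bins; noise dominates the two lowest bins.
noise_profile = estimate_noise([[4, 4, 1, 1], [6, 4, 1, 1]])
talking = [5, 12, 9, 1]   # noise plus voice energy in the middle bins
pause = [5, 4, 1, 1]      # noise only

for name, frame in (("talking", talking), ("pause", pause)):
    if is_speech(frame, noise_profile):
        print(name, "->", spectral_subtract(frame, noise_profile))
    else:
        print(name, "-> muted")
```

Real systems refine each stage considerably, for example by smoothing the noise estimate over time and shaping the subtraction to limit audible artifacts, but the division of labor is the same: learn the noise during pauses, subtract it during speech, and close the gate otherwise.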

Breaking the Seal: The Anatomy of Auditory Leaks

Even the most sophisticated acoustic engineering and flawless DSP algorithms are rendered entirely useless by a single, fundamental failure mode: the breach of the physical acoustic seal. The effectiveness of passive isolation is remarkably fragile, and understanding how it fails is critical for occupational safety.

The NRR printed on a box represents an ideal laboratory scenario. In the real world, the actual attenuation achieved is often drastically lower, a reality acknowledged by safety agencies through “derating” formulas. The primary vector for failure is the human operator. Proper insertion of a viscoelastic foam plug requires rolling the foam into a tight cylinder, reaching over the head to pull the pinna (outer ear) up and back to straighten the ear canal, inserting the plug deeply, and holding it in place while the foam expands. Failure to execute any of these steps results in an incomplete seal.
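Two widely cited derating approaches can be written as one-line formulas. The sketch below reflects the author-independent, commonly published forms of the OSHA and NIOSH methods for A-weighted measurements (a 7 dB spectral correction, a 50 percent safety factor in the OSHA method, and type-specific factors in the NIOSH method); treat the constants as assumptions to verify against current agency guidance before relying on them.

```python
# NIOSH derating factors by protector type (fraction of the labeled NRR kept).
NIOSH_FACTOR = {"earmuff": 0.75, "foam_plug": 0.50, "other_plug": 0.30}

def osha_derated_exposure(twa_dba: float, nrr: float) -> float:
    """Estimated protected exposure using a 7 dB correction for A-weighted
    measurements and a 50 percent safety factor on what remains."""
    return twa_dba - (nrr - 7.0) / 2.0

def niosh_derated_exposure(twa_dba: float, nrr: float, kind: str) -> float:
    """Estimated protected exposure using a type-specific derating of the
    labeled NRR, followed by the 7 dB correction for A-weighted measurements."""
    return twa_dba - (nrr * NIOSH_FACTOR[kind] - 7.0)

ambient = 100.0  # dBA
nrr = 29.0       # labeled rating with foam tips

print(f"label arithmetic: {ambient - nrr:.1f} dB")
print(f"OSHA derated:     {osha_derated_exposure(ambient, nrr):.1f} dBA")
print(f"NIOSH derated:    {niosh_derated_exposure(ambient, nrr, 'foam_plug'):.1f} dBA")
```

The contrast is stark: the label implies 71 dB at the eardrum, while the derated estimates land near 89 and 92.5 dBA, which is why fit and insertion technique matter as much as the number on the box.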

When a seal is incomplete, it creates an acoustic leak. Low-frequency sound waves, which have very long wavelengths, are particularly adept at diffracting around obstacles and passing through tiny gaps between the earplug and the skin of the ear canal. Even a gap of a fraction of a millimeter can allow a large share of the low-frequency rumble from a diesel engine to bypass the plug, striking the eardrum with largely undiminished force.

Furthermore, the materials themselves are subjected to severe environmental degradation. Industrial environments are hostile. The human body produces sweat, which is a corrosive saline solution containing lactic acid and urea. Ear canals produce cerumen (earwax). Over weeks of use, these biological fluids penetrate the cellular structure of polyurethane foam or coat the surface of silicone elastomers.

This exposure fundamentally alters the mechanical properties of the polymers. The plasticizers in the foam may leach out, causing the material to lose its viscoelastic memory and become hard and brittle, rendering it incapable of conforming to the ear canal. Silicone can micro-tear or lose its elastic rebound. Once the material science of the plug breaks down, its acoustic impedance shifts, its ability to maintain a pressure seal vanishes, and the user is unknowingly exposed to dangerous decibel levels, believing they are protected simply because a physical object is present in their ear.

Navigating the Future of Occupational Hearing Conservation

The convergence of certified physical protection with wireless communication capabilities represents a major paradigm shift in occupational safety. Historically, hearing protection was viewed purely as a subtractive measure—a mandatory discomfort that isolated workers not only from danger but also from their colleagues, situational awareness, and any form of auditory stimulation.

The modern hybrid approach acknowledges human psychology. Workers are far more likely to strictly adhere to hearing conservation protocols if the protective equipment also provides a net positive benefit to their daily experience, such as the ability to listen to a podcast during a monotonous shift or seamlessly answer a phone call without removing their gloves and their earplugs in a hazardous zone.

Looking forward, the integration of acoustics and microelectronics will deepen. We are approaching an era of intelligent, data-driven Personal Protective Equipment (PPE). Future iterations of in-ear devices will likely incorporate internal dosimetry microphones. Rather than relying on theoretical NRR calculations or static environmental measurements, these microscopic sensors, positioned inside the ear canal between the plug and the eardrum, will measure the exact, real-time acoustic dose striking the tympanic membrane.

If an earplug loses its seal or the environmental noise surpasses safe limits despite the attenuation, the device will instantly alert the user via an auditory prompt or log the exposure data to a centralized safety management system via Bluetooth. This transition from passive, assumed protection to active, verified, and personalized acoustic monitoring will close the final loop in occupational hearing conservation. The technology will no longer just build a fortress; it will actively patrol the walls, ensuring that the invisible, destructive waves of the modern world remain safely outside.
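The bookkeeping such a device would need is simple to sketch. The class below accumulates a daily noise dose from in-ear level readings and reports when a threshold is crossed. It is a speculative illustration of the concept, not a description of any existing product; the class name, the one-second sampling default, and the choice of the NIOSH criterion (85 dBA for eight hours, 3 dB exchange rate) are all assumptions.

```python
def allowed_seconds(level_dba: float,
                    criterion_db: float = 85.0,
                    exchange_db: float = 3.0) -> float:
    """Allowable daily exposure at a given level (assumed NIOSH-style rule)."""
    return 8 * 3600 / 2 ** ((level_dba - criterion_db) / exchange_db)

class InEarDosimeter:
    """Hypothetical dose tracker fed by a microphone behind the earplug."""

    def __init__(self, alert_percent: float = 100.0):
        self.dose_percent = 0.0
        self.alert_percent = alert_percent

    def add_sample(self, level_dba: float, seconds: float = 1.0) -> bool:
        """Add one measurement interval to the running dose and return
        True once the accumulated dose reaches the alert threshold."""
        self.dose_percent += 100.0 * seconds / allowed_seconds(level_dba)
        return self.dose_percent >= self.alert_percent

meter = InEarDosimeter()

# Four hours with a good seal: 71 dBA behind the plug.
meter.add_sample(71.0, seconds=4 * 3600)
print(f"dose after 4 h sealed: {meter.dose_percent:.1f}%")

# The seal fails and the in-ear level jumps to 100 dBA.
minutes = 0
while not meter.add_sample(100.0, seconds=60):
    minutes += 1
print(f"alert raised about {minutes + 1} minutes after the seal failed")
```

Hours of properly sealed exposure barely register, while a failed seal in the same environment consumes the entire daily allowance in roughly a quarter of an hour. A static NRR calculation would never reveal that difference; a measurement behind the plug would.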